Why Should You Use a Web Scraping and CAPTCHA Solving Service?

Answer

Using a web scraping and CAPTCHA solving service simplifies data extraction by handling proxies, JavaScript rendering, and security challenges automatically. It reduces development time, improves success rates, and allows you to scale scraping operations without managing complex infrastructure or constantly adapting to website protection changes.

Detailed Explanation

Modern web scraping is no longer just about sending HTTP requests and parsing HTML. Websites actively deploy anti-bot protections such as rate limiting, browser fingerprinting, IP blocking, and CAPTCHA challenges to prevent automated access. These protections make building and maintaining a reliable scraping system significantly more complex.

A managed scraping or automation service acts as an abstraction layer between your application and the target website. Instead of manually configuring proxies, handling dynamic JavaScript rendering, or solving CAPTCHA challenges, the service handles these tasks automatically and returns structured data. This drastically reduces engineering overhead and improves reliability.

Additionally, websites frequently update their detection mechanisms, which can break custom-built scrapers. Keeping such scrapers working requires continuous monitoring and updates. With a specialized solution, the provider handles these updates, allowing developers to focus on data processing rather than infrastructure maintenance.

At scale, challenges like IP bans, request blocking (403/429 errors), and CAPTCHA interruptions become the primary bottlenecks. These issues are not trivial to solve and often require a combination of proxy rotation, browser emulation, and intelligent request handling to maintain access.
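As a rough illustration of the "intelligent request handling" mentioned above, the sketch below retries a request with exponential backoff and jitter when the target answers with 403 or 429. The `fetch` callable, the function names, and the default limits are assumptions made for this example and do not belong to any particular library; in practice `fetch` would wrap your HTTP client and possibly switch proxies between attempts.

```python
import random
import time
from typing import Callable, Tuple

# Status codes treated as "blocked or throttled" in this sketch.
RETRYABLE = {403, 429}


def backoff_delay(attempt: int, base: float = 1.0, cap: float = 60.0) -> float:
    """Exponential backoff with full jitter: a random delay in
    [0, min(cap, base * 2**attempt)] seconds."""
    return random.uniform(0, min(cap, base * (2 ** attempt)))


def fetch_with_retry(
    fetch: Callable[[str], Tuple[int, str]],  # hypothetical: returns (status, body)
    url: str,
    max_attempts: int = 4,
    sleep: Callable[[float], None] = time.sleep,
) -> Tuple[int, str]:
    """Call fetch(url) until it returns a non-retryable status or the
    attempt budget is spent. Returns the last (status, body) seen."""
    status, body = 0, ""
    for attempt in range(max_attempts):
        status, body = fetch(url)
        if status not in RETRYABLE:
            return status, body
        # Wait before the next attempt, but not after the final one.
        if attempt < max_attempts - 1:
            sleep(backoff_delay(attempt))
    return status, body
```

Passing `sleep` as a parameter keeps the function easy to test without real waiting. Backoff alone will not get past a hard IP ban, which is why it is usually paired with proxy rotation.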

Solutions / Methods

- Build a custom scraping infrastructure: You can develop your own system using headless browsers, proxy pools, and CAPTCHA solvers. While flexible, this approach requires significant time, ongoing maintenance, and expertise in anti-detection techniques.

- Use a managed scraping API: A scraping API abstracts complexity by handling proxy rotation, JavaScript rendering, and retry logic. This allows developers to focus on extracting and processing data rather than managing infrastructure.

- Integrate automated CAPTCHA solving services: Solutions like CapSolver can help handle challenges such as reCAPTCHA, Cloudflare Turnstile, and image-based CAPTCHAs. By combining CAPTCHA solving with security challenge handling strategies, you can maintain high success rates and uninterrupted automation workflows.
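Solver services are commonly integrated by submitting a task and then polling for its result. The sketch below shows only that control flow. `create_task` and `get_result` are hypothetical callables that you would implement against your provider's documented API; nothing here reflects the actual CapSolver client or its endpoints, and the interval and timeout defaults are arbitrary.

```python
import time
from typing import Callable, Optional


def solve_captcha(
    create_task: Callable[[], str],               # hypothetical: submits a task, returns its id
    get_result: Callable[[str], Optional[str]],   # hypothetical: returns a token, or None if not ready
    poll_interval: float = 2.0,
    timeout: float = 120.0,
    sleep: Callable[[float], None] = time.sleep,
    clock: Callable[[], float] = time.monotonic,
) -> str:
    """Submit a solving task and poll until a token arrives or the timeout passes."""
    task_id = create_task()
    deadline = clock() + timeout
    while True:
        token = get_result(task_id)
        if token is not None:
            return token
        if clock() >= deadline:
            raise TimeoutError(f"no solution for task {task_id} within {timeout}s")
        sleep(poll_interval)
```

The returned token is then submitted to the target site in whatever form field or header that site expects. Injecting `sleep` and `clock` lets the loop be tested without real delays.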

Best Practice / Tips

- Combine multiple techniques (proxy rotation, consistent browser fingerprints, and CAPTCHA solving) for better success rates.

- Prefer session-based IP rotation instead of per-request switching to mimic real user behavior.

- Monitor response codes and detection signals to adapt scraping strategies dynamically.

- Use structured logging to identify failures caused by anti-bot protections.
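Several of the tips above can be sketched together: pinning each session to one proxy, classifying responses into detection signals, and emitting one structured log line per request. The function names, outcome labels, and the simple "captcha" substring check are assumptions for illustration; real detection signals vary by site and the heuristic can misfire on pages that merely mention the word.

```python
import hashlib
import json
import logging
from typing import Sequence


def proxy_for_session(session_id: str, proxies: Sequence[str]) -> str:
    """Map a session id to one proxy deterministically, so every request in
    that session leaves from the same IP (session-based rotation)."""
    digest = hashlib.sha256(session_id.encode("utf-8")).digest()
    return proxies[int.from_bytes(digest[:8], "big") % len(proxies)]


def classify(status: int, body: str) -> str:
    """Rough outcome label derived from the status code and response body."""
    if "captcha" in body.lower():  # naive heuristic, assumed for this sketch
        return "captcha"
    if status == 429:
        return "rate_limited"
    if status == 403:
        return "blocked"
    if 200 <= status < 300:
        return "ok"
    return "other_error"


def log_outcome(logger: logging.Logger, url: str, session_id: str,
                proxy: str, status: int, body: str) -> dict:
    """Emit one JSON log line per request and return the logged record."""
    record = {
        "url": url,
        "session_id": session_id,
        "proxy": proxy,
        "status": status,
        "outcome": classify(status, body),
    }
    logger.info(json.dumps(record, sort_keys=True))
    return record
```

Because each line is JSON with a fixed set of keys, failures can later be grouped by `outcome` or `proxy` to see which protection is triggering and whether a specific exit IP is burned.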

👉 Related:

CapSolver FAQ — capsolver.com

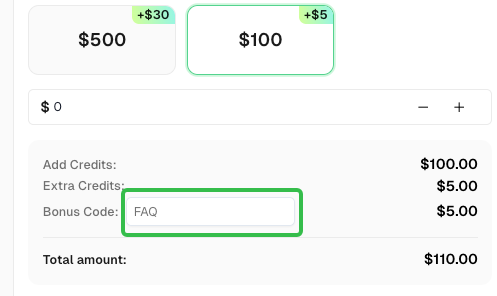

Use code FAQ when signing up at CapSolver to receive an additional 5% bonus on your recharge.