What Are the Main Challenges in Web Scraping and How to Overcome Them?

Answer

Web scraping faces several key challenges: anti-bot protections such as CAPTCHA and IP blocking, changing or dynamic website structures, and data accuracy issues. These obstacles disrupt automation workflows and undermine data reliability. To overcome them, developers use rotating proxies, headless browsers, and automated CAPTCHA-solving tools such as CapSolver to maintain stable and scalable scraping operations.

Detailed Explanation

Web scraping has become essential for data-driven applications, but modern websites actively deploy defensive mechanisms to prevent automated access. One of the most common barriers is CAPTCHA, designed to distinguish human users from bots. Advanced systems now analyze behavior patterns, browser fingerprints, and interaction signals, making them increasingly difficult to handle.

Another major challenge is IP blocking and rate limiting. When a scraper sends too many requests from a single IP or exhibits non-human behavior, websites may restrict or completely block access. These blocks can be temporary or permanent and often include soft bans that serve misleading or incomplete data.

Website structure changes also pose a significant issue. HTML layouts, APIs, or page elements may change without notice, breaking existing scraping logic. Additionally, dynamic content loaded via JavaScript requires more advanced tools like headless browsers to render pages correctly.
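One common way to soften the impact of layout changes is to try several candidate locations for the same field and use the first one that yields text. The sketch below uses only Python's standard `html.parser`; the `PRICE_CANDIDATES` list and the example markup are hypothetical and would need to match the target site.

```python
from html.parser import HTMLParser


class _TextByAttr(HTMLParser):
    """Collect the text of the first element matching tag + attribute value."""

    def __init__(self, tag, attr, value):
        super().__init__()
        self.tag, self.attr, self.value = tag, attr, value
        self.depth = 0      # > 0 while we are inside the matched element
        self.done = False   # True once the first match has been closed
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if self.done:
            return
        if self.depth:
            # Track nested elements of the same tag so we close at the right one.
            if tag == self.tag:
                self.depth += 1
        elif tag == self.tag:
            attr_value = dict(attrs).get(self.attr) or ""
            if self.value in attr_value.split():
                self.depth = 1

    def handle_endtag(self, tag):
        if self.depth and tag == self.tag:
            self.depth -= 1
            if self.depth == 0:
                self.done = True

    def handle_data(self, data):
        if self.depth:
            self.chunks.append(data)


def extract_first(html, candidates):
    """Try each (tag, attr, value) candidate in order; return (text, candidate)."""
    for candidate in candidates:
        parser = _TextByAttr(*candidate)
        parser.feed(html)
        text = "".join(parser.chunks).strip()
        if text:
            return text, candidate
    return None, None


# Hypothetical candidates: the current layout first, then known fallbacks.
PRICE_CANDIDATES = [
    ("span", "class", "price"),
    ("div", "id", "product-price"),
]

old_layout = '<span class="price">19.99</span>'
new_layout = '<div id="product-price"><b>19.99</b></div>'
```

Logging which candidate matched (the second return value) gives an early signal that the primary layout has changed, before every fallback stops working.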

Finally, maintaining data accuracy and consistency is challenging because of incomplete responses, interference from anti-bot systems, and content that varies with geolocation or session behavior.
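A lightweight validation step can catch incomplete or misleading responses before they reach downstream storage. The following is a minimal sketch; the field names (`title`, `price`, `url`) and the 20% threshold are assumptions chosen for illustration, not values from any particular site or tool.

```python
def validate_record(record, required=("title", "price", "url")):
    """Return a list of problems found in one scraped record (empty if clean)."""
    problems = []
    for field in required:
        value = record.get(field)
        if value is None or not str(value).strip():
            problems.append(f"missing {field}")
    price = record.get("price")
    if price is not None and str(price).strip():
        try:
            if float(price) <= 0:
                problems.append("non-positive price")
        except (TypeError, ValueError):
            problems.append("price is not numeric")
    return problems


def looks_degraded(records, max_bad_ratio=0.2):
    """Flag a batch whose share of invalid records exceeds the threshold.

    An empty batch is treated as degraded, since a soft ban may return
    pages with no usable items at all.
    """
    if not records:
        return True
    bad = sum(1 for record in records if validate_record(record))
    return bad / len(records) > max_bad_ratio


good = {"title": "Widget", "price": "19.99", "url": "https://example.com/w"}
bad = {"title": "", "price": "N/A", "url": "https://example.com/x"}
```

A batch flagged by `looks_degraded` is a reasonable trigger for pausing the job and checking whether the scraper has been soft-banned or the page layout has changed.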

Solutions / Methods

- Use Rotating Proxies: Distribute requests across multiple IP addresses to avoid detection and stay within rate limits. Residential or mobile proxies are often more reliable than datacenter IPs for maintaining access.

- Leverage Headless Browsers & Automation Tools: Tools like Puppeteer or Playwright simulate real user interactions, enabling scraping of JavaScript-heavy websites and reducing detection through realistic behavior patterns.

- Integrate CAPTCHA Solving Services: Modern anti-bot systems rely heavily on CAPTCHA challenges. Using automated CAPTCHA-solving services such as CapSolver helps handle these barriers efficiently, enabling uninterrupted data extraction even on protected websites.
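The proxy rotation idea can be sketched with the standard library alone. The `ProxyRotator` class, its `max_failures` parameter, and the proxy URLs are hypothetical names introduced here for illustration; a production setup would more likely rely on a proxy provider's own rotation endpoint.

```python
import urllib.request


def fetch_via_proxy(url, proxy, timeout=10.0):
    """Fetch a URL through one HTTP proxy using urllib."""
    opener = urllib.request.build_opener(
        urllib.request.ProxyHandler({"http": proxy, "https": proxy})
    )
    with opener.open(url, timeout=timeout) as response:
        return response.read()


class ProxyRotator:
    """Round-robin over a proxy pool, skipping proxies that keep failing."""

    def __init__(self, proxies, max_failures=3):
        self.failures = {proxy: 0 for proxy in proxies}
        self.max_failures = max_failures
        self._index = 0

    def healthy(self):
        return [p for p, n in self.failures.items() if n < self.max_failures]

    def next_proxy(self):
        pool = self.healthy()
        if not pool:
            raise RuntimeError("no healthy proxies left")
        proxy = pool[self._index % len(pool)]
        self._index += 1
        return proxy

    def fetch(self, url, fetch=fetch_via_proxy, attempts=3):
        """Try up to `attempts` proxies; `fetch` is injectable for testing."""
        last_error = None
        for _ in range(attempts):
            proxy = self.next_proxy()
            try:
                body = fetch(url, proxy)
                self.failures[proxy] = 0  # a success resets the counter
                return body
            except OSError as exc:  # urllib.error.URLError subclasses OSError
                self.failures[proxy] += 1
                last_error = exc
        raise RuntimeError(f"all {attempts} attempts failed") from last_error


# Offline demonstration with a fake fetcher: the first proxy is "blocked".
P1, P2 = "http://p1.example:8080", "http://p2.example:8080"
calls = []


def fake_fetch(url, proxy):
    calls.append(proxy)
    if proxy == P1:
        raise OSError("blocked")
    return b"ok"


rotator = ProxyRotator([P1, P2], max_failures=1)
body = rotator.fetch("http://example.com/", fetch=fake_fetch)
```

Passing the fetch function as a parameter keeps the rotation logic testable without network access and makes it easy to swap in a headless-browser fetcher later.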

Best Practice / Tips

- Implement request throttling and randomized delays to mimic human browsing behavior.

- Maintain session consistency (cookies, headers, fingerprint) to reduce detection risk.

- Continuously monitor scraping performance and adapt to structural or security changes.

- Combine multiple techniques (proxy + browser + captcha solving) for higher success rates.
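Request throttling with randomized delays might look like the sketch below. The delay values, the retry count, and the function names are illustrative assumptions; appropriate pacing depends on the target site and its terms of use.

```python
import random
import time


def jittered_delay(base=2.0, jitter=1.0):
    """Pause length between requests: a fixed base plus random jitter."""
    return base + random.uniform(0, jitter)


def backoff_delay(attempt, base=1.0, cap=60.0):
    """Exponential backoff with full jitter, capped at `cap` seconds."""
    return random.uniform(0, min(cap, base * 2 ** attempt))


def polite_fetch_all(urls, fetch, sleep=time.sleep, max_retries=3):
    """Fetch URLs one by one, backing off on errors and pausing in between.

    `fetch` is any callable taking a URL; `sleep` is injectable so the
    pacing logic can be exercised without actually waiting.
    """
    results = {}
    for url in urls:
        for attempt in range(max_retries + 1):
            try:
                results[url] = fetch(url)
                break
            except OSError:
                if attempt == max_retries:
                    results[url] = None  # give up on this URL
                else:
                    sleep(backoff_delay(attempt))
        sleep(jittered_delay())
    return results


# Offline demonstration: "b" fails once, then succeeds on retry.
slept = []
failed_once = set()


def flaky_fetch(url):
    if url == "b" and url not in failed_once:
        failed_once.add(url)
        raise OSError("temporary block")
    return url.upper()


results = polite_fetch_all(["a", "b"], flaky_fetch, sleep=slept.append)
```

Randomizing both the pause between requests and the backoff after failures avoids the fixed-interval pattern that rate limiters tend to flag.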

👉 Related:

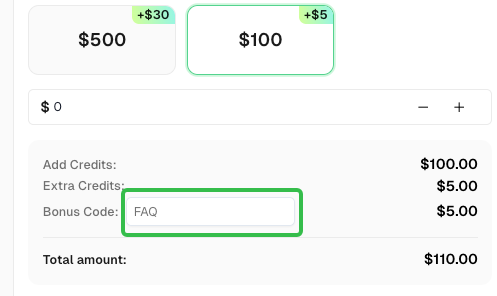

Use code FAQ when signing up at CapSolver to receive an additional 5% bonus on your recharge.

CapSolver FAQ — capsolver.com