What is Real Estate Data Scraping?

Answer

Real estate data scraping is the automated process of collecting property-related information from websites, listings, and public records. It extracts structured data such as prices, locations, availability, and market trends to support analysis and decision-making in real estate investing and research.

Detailed Explanation

Real estate data scraping is a form of web scraping where automated tools extract information from property listing platforms, brokerage sites, and housing marketplaces. Instead of manually reviewing listings, scripts or bots gather data at scale and convert unstructured web content into structured datasets.

This process typically targets publicly available property information such as listing titles, addresses, pricing history, rental rates, square footage, amenities, and neighborhood insights. This type of data is commonly used for market intelligence, portfolio management, and competitive analysis in real estate markets. The main challenge is that real estate websites frequently change their layouts and deploy anti-bot protections, so manual collection does not scale and automated extraction logic can break or return inconsistent results unless it is maintained.

Solutions / Methods

- Direct HTML parsing: Using scraping tools or scripts to extract structured fields from listing pages and normalize them into databases or spreadsheets for analysis.

- API-based data extraction: When available, official or third-party APIs provide structured access to property data with higher stability and fewer blocking issues.

- Automated scraping with security challenge handling: Modern scraping workflows use headless browsers, proxies, and fingerprint management to handle dynamically rendered pages and bot-detection systems. For CAPTCHA-protected pages, automated CAPTCHA-solving services such as CapSolver can be integrated so that data collection pipelines are not interrupted.
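
As a rough illustration of the direct HTML parsing method listed above, the sketch below extracts a few fields from a listing page and normalizes them into records. The markup, the class names (`listing`, `title`, `price`, `address`, `sqft`), and the helper names are all assumptions made for the example; real listing sites use their own structure, so the selectors would need to be adapted. It uses only the Python standard library.

```python
import re
from html.parser import HTMLParser

# Hypothetical listing markup; real sites use different tags and class names.
SAMPLE_HTML = """
<div class="listing">
  <h2 class="title">Sunny 2BR Apartment</h2>
  <span class="price">$2,450/mo</span>
  <span class="address">12 Example St, Springfield</span>
  <span class="sqft">850 sq ft</span>
</div>
"""

# Class names we treat as fields (an assumption for this example).
FIELDS = {"title", "price", "address", "sqft"}


class ListingParser(HTMLParser):
    """Collect the text of known field elements inside each listing block."""

    def __init__(self):
        super().__init__()
        self.listings = []
        self._field = None  # field whose text we are currently waiting for

    def handle_starttag(self, tag, attrs):
        classes = (dict(attrs).get("class") or "").split()
        if "listing" in classes:
            self.listings.append({})  # start a new record
            return
        for name in classes:
            if name in FIELDS and self.listings:
                self._field = name

    def handle_data(self, data):
        text = data.strip()
        if self._field and text:
            self.listings[-1][self._field] = text
            self._field = None

    def handle_endtag(self, tag):
        self._field = None  # never carry a pending field past its element


def first_int(text):
    """Return the first integer in the text, ignoring thousands separators."""
    match = re.search(r"\d[\d,]*", text or "")
    return int(match.group().replace(",", "")) if match else None


def parse_listings(html):
    """Parse listing HTML and normalize numeric fields to integers."""
    parser = ListingParser()
    parser.feed(html)
    records = []
    for raw in parser.listings:
        records.append({
            "title": raw.get("title"),
            "address": raw.get("address"),
            "price": first_int(raw.get("price")),
            "sqft": first_int(raw.get("sqft")),
        })
    return records


records = parse_listings(SAMPLE_HTML)
print(records)
```

The normalized records can then be written to a database or spreadsheet. Pages that render their content with JavaScript would not work with this approach and would need a headless browser or an API instead.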

Best Practice / Tips

To ensure reliable real estate data collection, it is important to respect website terms of service, implement rate limiting, and regularly validate data accuracy. Using structured pipelines with error handling and deduplication improves data quality. Combining multiple sources also helps reduce bias and improves market coverage.
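
The practices above (rate limiting, validation, and deduplication) can be sketched in a few lines. This is a minimal example under stated assumptions, not a production pipeline: the `RateLimiter` class, the record fields (`address`, `price`), and the rule of treating a normalized address as the duplicate key are all hypothetical choices made for illustration.

```python
import time


class RateLimiter:
    """Enforce a minimum delay between consecutive requests."""

    def __init__(self, min_interval, clock=time.monotonic, sleep=time.sleep):
        self.min_interval = min_interval
        self._clock = clock    # injectable so the limiter can be tested
        self._sleep = sleep
        self._last = None      # time of the previous request, if any

    def wait(self):
        """Block until at least min_interval has passed since the last call."""
        now = self._clock()
        if self._last is not None:
            remaining = self.min_interval - (now - self._last)
            if remaining > 0:
                self._sleep(remaining)
                now = self._clock()
        self._last = now


def listing_key(record):
    """Duplicate key: lowercased address with whitespace collapsed."""
    return " ".join((record.get("address") or "").lower().split())


def is_valid(record):
    """Basic sanity check: non-empty address and a positive numeric price."""
    price = record.get("price")
    return bool(listing_key(record)) and isinstance(price, (int, float)) and price > 0


def clean(records):
    """Drop invalid records, then keep the first record seen for each key."""
    seen = set()
    result = []
    for record in records:
        if not is_valid(record):
            continue
        key = listing_key(record)
        if key in seen:
            continue
        seen.add(key)
        result.append(record)
    return result


scraped = [
    {"address": "12 Example St, Springfield", "price": 2450},
    {"address": "12  example st, springfield", "price": 2450},  # duplicate
    {"address": "7 Sample Ave, Springfield", "price": 0},       # invalid price
    {"address": "", "price": 1800},                             # missing address
]
print(clean(scraped))
```

In a real scraper, `limiter.wait()` would be called before each request, for example with `limiter = RateLimiter(min_interval=2.0)`. Matching duplicates on address alone is a simplification; listings gathered from multiple sources usually need a stronger key, such as address plus unit number.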

👉 Related:

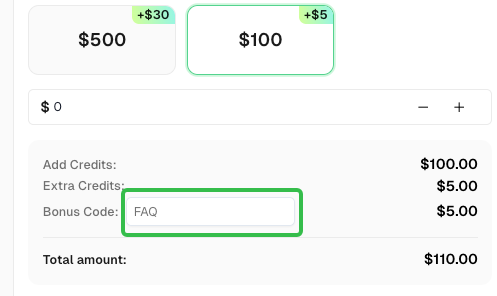

Use code

FAQwhen signing up at CapSolverto receive an additional 5% bonus on your recharge.

CapSolver FAQ — capsolver.com