How Web Scraping Works Explained Step by Step

Answer

Web scraping works by sending automated HTTP requests to a website, retrieving its HTML content, and then parsing that content to extract specific data points. The extracted information is structured into formats like JSON or CSV for storage, analysis, or automation workflows.

Detailed Explanation

Web scraping is essentially the automated version of how a browser loads a webpage. When a user visits a site, the browser sends an HTTP request to the server, receives HTML, and renders it visually. A scraper replicates the first two steps, but instead of rendering the page it extracts raw data from the HTML structure.

The process begins with sending a request to a target URL. The server responds with HTML, references to JavaScript files, and sometimes JSON embedded in the page. For static websites, this HTML already contains most of the data. For dynamic websites, where content is filled in by JavaScript after the initial load, a tool such as a headless browser may be required to execute the scripts and render the final DOM before extraction. Once the page is loaded, the scraper walks the DOM tree and locates the relevant elements using CSS selectors or XPath expressions.
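To make the element-location step concrete, here is a minimal sketch using only Python's standard-library html.parser. The sample markup, the class names (product, name, price), and the ProductParser class are all hypothetical, chosen for illustration. A parser library with CSS selector support would express the same lookup more compactly.

```python
from html.parser import HTMLParser

# Hypothetical sample markup standing in for the HTML of a fetched page.
SAMPLE_HTML = """
<ul>
  <li class="product"><span class="name">Widget</span><span class="price">$9.99</span></li>
  <li class="product"><span class="name">Gadget</span><span class="price">$24.50</span></li>
</ul>
"""


class ProductParser(HTMLParser):
    """Collects the text of span.name and span.price inside each li.product."""

    def __init__(self):
        super().__init__()
        self.products = []   # one dict per product found so far
        self._field = None   # field whose text is currently being read

    def handle_starttag(self, tag, attrs):
        classes = (dict(attrs).get("class") or "").split()
        if tag == "li" and "product" in classes:
            self.products.append({})          # start a new record
        elif tag == "span" and self.products:
            for field in ("name", "price"):
                if field in classes:
                    self._field = field

    def handle_data(self, data):
        # Only keep text that appears inside a field we are tracking.
        if self._field and data.strip():
            self.products[-1][self._field] = data.strip()

    def handle_endtag(self, tag):
        if tag == "span":
            self._field = None


parser = ProductParser()
parser.feed(SAMPLE_HTML)
products = parser.products
print(products)
```

The parser reacts to start tags, text, and end tags as they stream past, so it never builds a full DOM tree. That is enough for flat markup like this sample, but deeply nested pages are usually easier to handle with a tree-based parser.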

After identifying the required elements, the scraper extracts text, attributes, or structured values such as prices, product names, or metadata. Finally, the extracted data is cleaned, normalized, and stored in a database or spreadsheet, or exposed through an API for further use. This entire pipeline can run at scale to collect large datasets from multiple web sources.
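The cleaning and storage step might look like the following sketch. The raw_rows data and the normalize helper are hypothetical, and it assumes prices arrive as dollar-formatted strings. Output goes to in-memory strings rather than files to keep the example self-contained.

```python
import csv
import io
import json

# Hypothetical raw values as they might come out of the extraction step.
raw_rows = [
    {"name": "  Widget ", "price": "$9.99"},
    {"name": "Gadget", "price": "$1,024.50"},
]


def normalize(row):
    """Trim whitespace and convert a price string like '$1,024.50' to a float."""
    price = float(row["price"].replace("$", "").replace(",", ""))
    return {"name": row["name"].strip(), "price": price}


records = [normalize(row) for row in raw_rows]

# JSON: suits nested data and downstream programs.
json_text = json.dumps(records, indent=2)

# CSV: suits flat tables and spreadsheets.
buffer = io.StringIO()
writer = csv.DictWriter(buffer, fieldnames=["name", "price"])
writer.writeheader()
writer.writerows(records)
csv_text = buffer.getvalue()

print(json_text)
print(csv_text)
```

To write to disk instead, the same writer can be pointed at a file opened with newline="" as the csv module documentation recommends.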

Solutions / Methods

- HTTP Request Fetching: Use libraries like requests (Python) or axios (JavaScript) to send GET/POST requests and retrieve raw HTML from target pages.

- HTML Parsing & DOM Extraction: Use parsers such as BeautifulSoup (Python) or Cheerio (JavaScript) to navigate the DOM and extract targeted elements using selectors.

- Dynamic Rendering with Automation Tools: For JavaScript-heavy websites, headless browsers execute the page's scripts and render the final DOM before extraction. Where pages are protected by CAPTCHA challenges, solutions like CapSolver can assist in handling them during automated data extraction workflows.
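As a rough illustration of the request-fetching method, the sketch below uses Python's standard-library urllib.request rather than requests, purely so that it runs without third-party packages. The build_request and fetch_html helpers, the user-agent string, and the example URL are assumptions made for illustration.

```python
import urllib.request


def build_request(url, user_agent="example-scraper/0.1"):
    """Build a GET request that identifies the client via a User-Agent header."""
    return urllib.request.Request(url, headers={"User-Agent": user_agent})


def fetch_html(url, timeout=10.0):
    """Send the request and return the response body decoded as text."""
    with urllib.request.urlopen(build_request(url), timeout=timeout) as resp:
        # Use the charset declared by the server, falling back to UTF-8.
        charset = resp.headers.get_content_charset() or "utf-8"
        return resp.read().decode(charset, errors="replace")


# Usage (performs a real network call, so it is left commented out):
# html = fetch_html("https://example.com/")
```

The returned string is the raw HTML that a parser would then process. For pages that build their content with JavaScript, this string will not contain the rendered data, which is where a headless browser comes in.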

Best Practice / Tips

Effective web scraping depends on working with the target site's actual structure and minimizing unnecessary requests. Prefer selectors tied to stable attributes so that small layout changes do not break the scraping logic, implement retry mechanisms for network failures, and throttle requests to reduce server load. For large-scale scraping systems, combining structured parsing with resilient automation frameworks improves stability and scalability.
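Retries and throttling can be sketched as follows. The fetch_with_retry and crawl helpers, their default values, and the choice of exponential backoff are illustrative assumptions, not a prescribed configuration. The fetch argument is any function that takes a URL and returns its content.

```python
import time


def fetch_with_retry(fetch, url, retries=3, backoff=1.0, sleep=time.sleep):
    """Call fetch(url); on a network-style error, wait and try again.

    Catches OSError, the base class of ConnectionError, TimeoutError, and
    urllib.error.URLError. The wait doubles after each failed attempt.
    """
    for attempt in range(retries + 1):
        try:
            return fetch(url)
        except OSError:
            if attempt == retries:
                raise                      # out of retries: surface the error
            sleep(backoff * 2 ** attempt)  # 1x, 2x, 4x ... the base backoff


def crawl(fetch, urls, delay=1.0, sleep=time.sleep):
    """Fetch each URL in turn, pausing between requests to limit server load."""
    pages = {}
    for index, url in enumerate(urls):
        if index:
            sleep(delay)                   # throttle: no pause before the first
        pages[url] = fetch_with_retry(fetch, url, sleep=sleep)
    return pages
```

Passing sleep as a parameter keeps the helpers easy to test without real waiting. In production the default time.sleep applies.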

👉 Related:

- Web Scraping Legal

- Web Scraping with Curl Cffi

- Web Scraping Challenges and How to Solve

- Web Scraping Without Getting Blocked

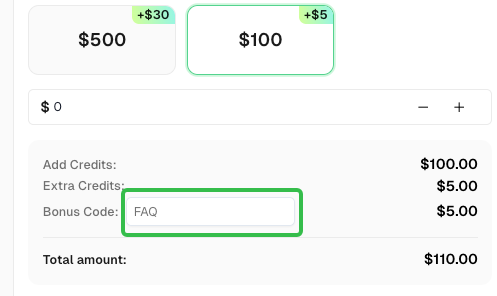

Use code

FAQwhen signing up at CapSolver to receive an additional 5% bonus on your recharge.

CapSolver FAQ — capsolver.com