What Is Quick Commerce Data Scraping?

Answer

Quick commerce data scraping refers to the automated extraction of real-time product, pricing, inventory, and delivery data from instant delivery platforms. It enables businesses to monitor dynamic market conditions, analyze demand trends, and optimize pricing or logistics strategies using continuously updated datasets.

Detailed Explanation

Quick commerce (Q-commerce) focuses on ultra-fast delivery, often within 30 to 60 minutes, which makes it one of the most dynamic segments in digital commerce. Because of its speed and hyperlocal nature, data such as product availability, prices, and delivery times change frequently, sometimes within minutes. Quick commerce data scraping is designed to capture this rapidly changing information using automated tools.

This process typically involves extracting structured data such as product listings, SKUs, pricing variations, stock levels, promotions, delivery ETAs, and customer reviews from web or app-based platforms. Unlike traditional e-commerce scraping, Q-commerce scraping must handle challenges like geo-specific content, JavaScript-heavy interfaces, and frequent UI or API updates.
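To make the shape of this data concrete, here is a minimal sketch of a record type for a single scraped observation. The class name `ProductObservation` and all of its field names are hypothetical and chosen for illustration; real platforms expose different fields, so treat this as one possible layout, not a standard schema.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class ProductObservation:
    """One scraped observation of a product at a given location and time.

    All field names are hypothetical; adapt them to the platform being scraped.
    """
    platform: str
    sku: str
    name: str
    price: float                         # current selling price
    currency: str
    in_stock: bool
    location_id: str                     # store, zone, or postcode the listing was served for
    observed_at: datetime                # capture time, stored in UTC
    list_price: Optional[float] = None   # pre-promotion price, if the page shows one
    eta_minutes: Optional[int] = None    # advertised delivery ETA, if the page shows one

    def discount_pct(self) -> Optional[float]:
        """Return the promotion discount as a percentage, or None if unknown."""
        if self.list_price is None or self.list_price <= 0:
            return None
        return round(100 * (self.list_price - self.price) / self.list_price, 2)


# Example values are made up for illustration.
obs = ProductObservation(
    platform="example-platform",
    sku="SKU-001",
    name="Whole Milk 1 L",
    price=1.80,
    currency="USD",
    in_stock=True,
    location_id="zone-42",
    observed_at=datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc),
    list_price=2.00,
    eta_minutes=25,
)
print(obs.discount_pct())
```

Keeping `location_id` and `observed_at` on every record matters here because the same SKU can carry different prices and stock states in different zones and at different times.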

The collected data is then used for multiple business applications, including competitive pricing analysis, demand forecasting, inventory optimization, and market intelligence. Since quick commerce platforms operate in real time, scraping systems often run at high frequency to ensure data freshness and accuracy, enabling faster and more informed decision-making.
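As an illustration of how high-frequency snapshots feed pricing and inventory analysis, the sketch below compares two consecutive snapshots and lists what changed. The `Snapshot` layout, the event names, and the sample values are assumptions made for this example, not a format used by any particular platform.

```python
from typing import Dict, List, Tuple

# Assumed layout: (sku, location_id) -> (price, in_stock)
Snapshot = Dict[Tuple[str, str], Tuple[float, bool]]


def diff_snapshots(previous: Snapshot, current: Snapshot) -> List[dict]:
    """List price, stock, and listing changes between two snapshots."""
    changes: List[dict] = []
    for key, (price, in_stock) in current.items():
        if key not in previous:
            changes.append({"key": key, "event": "new_listing", "price": price})
            continue
        old_price, old_stock = previous[key]
        if price != old_price:
            changes.append(
                {"key": key, "event": "price_change", "old": old_price, "new": price}
            )
        if in_stock != old_stock:
            event = "back_in_stock" if in_stock else "out_of_stock"
            changes.append({"key": key, "event": event})
    # Anything present before but missing now is treated as delisted.
    for key in previous.keys() - current.keys():
        changes.append({"key": key, "event": "delisted"})
    return changes


# Made-up sample data: two snapshots of the same zone taken a few minutes apart.
previous = {
    ("SKU-1", "zone-1"): (2.00, True),
    ("SKU-2", "zone-1"): (3.50, True),
    ("SKU-3", "zone-1"): (1.00, True),
}
current = {
    ("SKU-1", "zone-1"): (1.80, True),   # price dropped
    ("SKU-2", "zone-1"): (3.50, False),  # went out of stock
    ("SKU-4", "zone-1"): (5.00, True),   # newly listed
}

for change in diff_snapshots(previous, current):
    print(change)
```

Storing only the changes, instead of every full snapshot, is one way to keep storage manageable when the scraper runs every few minutes.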

Solutions / Methods

- Headless Browser Automation: Use tools like Puppeteer or Playwright to render JavaScript-heavy pages and simulate real user interactions, allowing accurate extraction of dynamic product and pricing data.

- Proxy Rotation & Geo-targeting: Implement rotating proxies and location-based IP simulation to collect region-specific data such as localized pricing, stock availability, and delivery coverage.

- Automated CAPTCHA Solving (e.g., CapSolver): Quick commerce platforms often deploy security systems and CAPTCHA challenges. Automated CAPTCHA solving services such as CapSolver help handle these protections efficiently, reducing interruptions to data collection at scale.
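The proxy rotation idea above can be sketched with the standard library alone. The class `RegionalProxyPool`, the region keys, and the `.example` proxy addresses are all hypothetical placeholders; a real deployment would load its proxy list from a provider and add health checks.

```python
import itertools
from typing import Dict, Iterator, List


class RegionalProxyPool:
    """Round-robin proxy selection, kept separate for each region."""

    def __init__(self, proxies_by_region: Dict[str, List[str]]) -> None:
        for region, proxies in proxies_by_region.items():
            if not proxies:
                raise ValueError(f"region {region!r} has no proxies")
        # One endless cycle per region so rotation in one region
        # does not affect the order in another.
        self._cycles: Dict[str, Iterator[str]] = {
            region: itertools.cycle(proxies)
            for region, proxies in proxies_by_region.items()
        }

    def next_proxy(self, region: str) -> str:
        """Return the next proxy URL for the given region."""
        if region not in self._cycles:
            raise KeyError(f"no proxies configured for region {region!r}")
        return next(self._cycles[region])


# Placeholder addresses; replace with real proxy endpoints.
pool = RegionalProxyPool(
    {
        "mumbai": ["http://proxy-a.example:8080", "http://proxy-b.example:8080"],
        "delhi": ["http://proxy-c.example:8080"],
    }
)

for _ in range(3):
    print(pool.next_proxy("mumbai"))
```

The returned URL would then be passed to the proxy setting of whichever HTTP client or headless browser the scraper uses, so that each request for a region leaves through an address located in that region.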

Best Practice / Tips

- Design scraping pipelines to handle frequent site structure changes and API updates.

- Normalize product data (names, sizes, variants) to ensure accurate comparison across platforms.

- Balance scraping frequency with rate limits to avoid detection or blocking.

- Always collect only publicly accessible data and comply with applicable legal and ethical guidelines.
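The normalization tip can be illustrated with a small sketch that pulls a pack size out of a product title and reduces the remaining name to a comparable form. The function names, the unit table, and the sample titles are assumptions for this example; real catalogs need more units, multipacks, and brand handling.

```python
import re
from typing import Optional, Tuple

# Assumed unit table: unit -> (base unit, multiplier to the base unit)
_UNIT_FACTORS = {
    "g": ("g", 1.0),
    "kg": ("g", 1000.0),
    "ml": ("ml", 1.0),
    "l": ("ml", 1000.0),
}
_SIZE_RE = re.compile(r"(\d+(?:\.\d+)?)\s*(kg|g|ml|l)\b", re.IGNORECASE)


def parse_size(title: str) -> Optional[Tuple[float, str]]:
    """Return (quantity, base_unit) for the first size found in a title."""
    match = _SIZE_RE.search(title)
    if match is None:
        return None
    quantity = float(match.group(1))
    base_unit, factor = _UNIT_FACTORS[match.group(2).lower()]
    return quantity * factor, base_unit


def normalize_name(title: str) -> str:
    """Lowercase a title, drop the size token and punctuation, collapse spaces."""
    without_size = _SIZE_RE.sub(" ", title)
    cleaned = re.sub(r"[^a-z0-9]+", " ", without_size.lower())
    return " ".join(cleaned.split())


# Made-up titles for the same product as two platforms might list it.
for title in ["Whole Milk 1L", "WHOLE MILK - 1000 ml"]:
    print(normalize_name(title), parse_size(title))
```

With names and sizes reduced to a common form, listings from different platforms can be matched on the pair (normalized name, size) and compared on price per base unit.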

👉 Related:

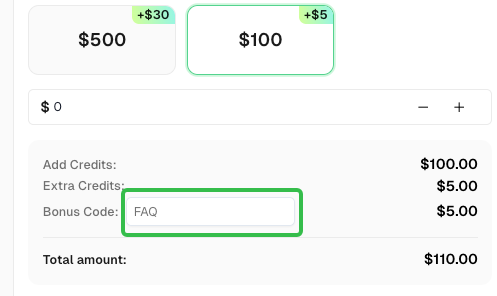

Use code FAQ when signing up at CapSolver to receive an additional 5% bonus on your recharge.
