Is Web Scraping Legal and What Are the Key Rules to Follow?

Answer

Web scraping is generally legal when collecting publicly accessible data, but legality depends on how the data is accessed, what type of data is collected, and how it is used. Violating terms of service, scraping personal or copyrighted data, or circumventing technical protections can create legal risk.

Detailed Explanation

Web scraping exists in a legal gray area because there is no single global law governing it. Instead, legality is determined by multiple factors, including jurisdiction, data type, and access method. In general, collecting publicly available information, such as product listings or publicly indexed pages, is often permitted, especially when no login or authentication is required.

However, “publicly accessible” does not mean “free to use without restrictions.” Many websites define rules in their terms of service, which may prohibit automated access. Additionally, scraping personal data can trigger privacy regulations like GDPR, while extracting copyrighted material for redistribution may violate intellectual property laws.

Technical behavior also matters. Aggressive scraping that overloads servers, ignores robots.txt, or circumvents protections such as login walls or CAPTCHA systems may be considered unauthorized access or abusive behavior. In some jurisdictions, this can lead to legal claims or enforcement actions.
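As an illustration of the robots.txt point, the following minimal sketch uses Python's standard `urllib.robotparser` module to check whether a path may be fetched. The robots.txt content, the `example-bot` user agent, and the example.com URLs are hypothetical and inlined so the sketch runs offline. A real scraper would load the site's actual file, and passing this check does not by itself establish that scraping is lawful.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content, inlined so the sketch runs without network access.
SAMPLE_ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Crawl-delay: 5
"""

USER_AGENT = "example-bot"  # hypothetical user agent name


def build_parser(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt text into a RobotFileParser."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


parser = build_parser(SAMPLE_ROBOTS_TXT)

# True when the rules allow this user agent to fetch the URL.
allowed_public = parser.can_fetch(USER_AGENT, "https://example.com/products/1")
allowed_private = parser.can_fetch(USER_AGENT, "https://example.com/private/data")

# Crawl-delay value in seconds, or None if the file does not set one.
delay = parser.crawl_delay(USER_AGENT)

print(allowed_public, allowed_private, delay)
```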

Ultimately, web scraping legality is context-dependent. It is influenced by what data you collect, how you collect it, and what you do with it afterward.

Solutions / Methods

- Focus on publicly accessible and non-sensitive data: Only scrape data that is available without authentication and avoid collecting personally identifiable information or restricted content. This significantly reduces legal exposure.

- Respect website policies and technical boundaries: Review terms of service, follow robots.txt guidelines, and apply rate limiting to avoid disrupting servers or triggering security defenses.

- Use compliant automation and CAPTCHA handling tools: When encountering security systems such as reCAPTCHA or Cloudflare challenges, solutions like CapSolver can help automate the interaction. Use these tools responsibly and within legal and ethical limits, not to circumvent protections for misuse.
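The rate limiting mentioned in the list above can be sketched as a small throttle that enforces a minimum interval between requests. This is one possible approach, not a prescribed implementation: the `Throttle` class and its parameters are hypothetical names introduced for illustration, and the clock and sleep functions are injectable only so the behavior can be checked without real delays.

```python
import time


class Throttle:
    """Enforce a minimum number of seconds between consecutive requests."""

    def __init__(self, min_interval, clock=time.monotonic, sleep=time.sleep):
        self.min_interval = min_interval
        self._clock = clock
        self._sleep = sleep
        self._last = None  # time of the previous request, None before the first

    def wait(self):
        """Block until the interval has elapsed; return the seconds slept."""
        waited = 0.0
        if self._last is not None:
            remaining = self.min_interval - (self._clock() - self._last)
            if remaining > 0:
                self._sleep(remaining)
                waited = remaining
        self._last = self._clock()
        return waited
```

In use, a scraper would call `throttle.wait()` immediately before each request, for example with `Throttle(min_interval=5.0)` when a site's robots.txt asks for a five-second crawl delay.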

Best Practice / Tips

- Prefer official APIs when available, as they provide authorized and structured access to data.

- Document your data sources and usage purposes for compliance and auditing.

- Apply conservative request rates and rotate infrastructure to avoid detection and blocking.

- Consult legal professionals when building large-scale or commercial scraping systems.
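For the tip on documenting data sources and usage purposes, one lightweight option is to write a structured record for every source a scraper touches. The sketch below is an assumption about what such a record might contain: the `SourceRecord` fields and the helper names are hypothetical, and a real compliance process may require different or additional information.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass
class SourceRecord:
    """One audit entry describing a scraped source (hypothetical schema)."""
    url: str
    purpose: str
    contains_personal_data: bool
    retrieved_at: str  # ISO 8601 timestamp in UTC


def make_record(url, purpose, contains_personal_data, now=None):
    """Build a record, stamping it with the current UTC time by default."""
    now = now or datetime.now(timezone.utc)
    return SourceRecord(url, purpose, contains_personal_data, now.isoformat())


def to_json_line(record):
    """Serialize a record as one JSON line, suitable for an append-only log."""
    return json.dumps(asdict(record), sort_keys=True)
```

Appending one such line per source to a log file gives a simple, reviewable trail of what was collected and why.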

👉 Related:

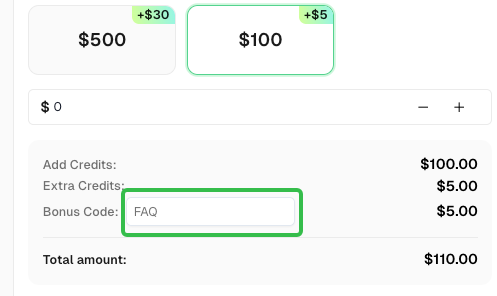

Use code FAQ when signing up at CapSolver to receive an additional 5% bonus on your recharge.

CapSolver FAQ — capsolver.com