What Are the Common Uses of Web Scraping?

Answer

Web scraping is commonly used to automatically collect and structure online data for applications such as market research, price comparison, lead generation, and sentiment analysis. Businesses rely on it to monitor competitors, detect trends, and support faster, data-driven decision-making across industries like e-commerce, finance, and healthcare.

Detailed Explanation

Web scraping enables automated extraction of publicly available information from websites, transforming unstructured web content into structured datasets that can be analyzed at scale. Instead of manually reviewing pages, organizations deploy scraping systems to continuously gather data from sources such as marketplaces, social platforms, directories, and review sites.
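As a minimal sketch of that transformation, the example below turns a small HTML fragment into a list of structured records using only Python's standard library. The markup, class names, and the `ProductParser` and `parse_products` names are hypothetical and chosen for illustration; a real page needs its own selectors, and many projects use a dedicated parsing library instead.

```python
from html.parser import HTMLParser

# Hypothetical markup; real pages will differ and need their own selectors.
SAMPLE_HTML = """
<ul>
  <li class="product"><span class="name">Desk Lamp</span><span class="price">$24.99</span></li>
  <li class="product"><span class="name">USB Hub</span><span class="price">$15.50</span></li>
</ul>
"""


class ProductParser(HTMLParser):
    """Collects the text of name/price spans into one dict per product."""

    def __init__(self):
        super().__init__()
        self.records = []
        self._field = None  # class of the span currently being read, if any

    def handle_starttag(self, tag, attrs):
        cls = dict(attrs).get("class")
        if tag == "li" and cls == "product":
            self.records.append({})  # start a new record
        elif tag == "span" and cls in ("name", "price") and self.records:
            self._field = cls

    def handle_data(self, data):
        if self._field:
            self.records[-1][self._field] = data.strip()

    def handle_endtag(self, tag):
        if tag == "span":
            self._field = None


def parse_products(html):
    """Return a list of {'name': ..., 'price': ...} dicts found in the HTML."""
    parser = ProductParser()
    parser.feed(html)
    return parser.records


records = parse_products(SAMPLE_HTML)
```

Each entry in `records` is a plain dictionary, so the result can be written to CSV, loaded into a database, or passed to an analysis step.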

One of the most widespread applications is market research, where scraped data helps identify emerging product trends, customer preferences, and competitor positioning. By analyzing large datasets from e-commerce platforms and forums, companies can often detect shifts in demand earlier than traditional research methods would reveal them.
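One simple way to surface a shift in demand is to compare how often a product term appears in two scraping periods. The sketch below assumes the terms have already been extracted from listings or forum posts; the `mention_growth` function, its `min_mentions` threshold, and the sample terms are illustrative assumptions, not a standard method.

```python
from collections import Counter


def mention_growth(previous, current, min_mentions=3):
    """Rank terms by how much their mention count grew between two periods.

    previous, current: lists of product terms seen in each period.
    Terms with fewer than min_mentions in the current period are ignored
    so that one-off mentions do not look like trends.
    """
    prev_counts = Counter(previous)
    curr_counts = Counter(current)
    growth = {}
    for term, count in curr_counts.items():
        if count >= min_mentions:
            # Treat unseen terms as one prior mention to avoid dividing by zero.
            growth[term] = count / max(prev_counts[term], 1)
    return sorted(growth.items(), key=lambda item: item[1], reverse=True)


last_week = ["standing desk", "desk lamp", "desk lamp"]
this_week = ["standing desk"] * 6 + ["desk lamp"] * 3
ranking = mention_growth(last_week, this_week)
```

Terms at the top of `ranking` grew fastest relative to the earlier period and are candidates for closer review.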

Another major use case is competitive pricing intelligence. Businesses extract product prices, discounts, and availability data to optimize their own pricing strategies in real time. This is especially critical in highly competitive online retail environments where price fluctuations happen frequently.
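The comparison step can be sketched as follows, assuming competitor prices have already been scraped as strings and keyed by a shared product identifier. The SKUs, prices, and the `parse_price` and `find_undercut` helpers are hypothetical.

```python
from decimal import Decimal


def parse_price(text):
    """Convert a scraped price string such as '$1,299.00' to a Decimal."""
    return Decimal(text.replace("$", "").replace(",", "").strip())


def find_undercut(own_prices, competitor_prices):
    """Return {sku: price gap} for products the competitor sells for less."""
    gaps = {}
    for sku, own in own_prices.items():
        theirs = competitor_prices.get(sku)
        if theirs is not None and theirs < own:
            gaps[sku] = own - theirs
    return gaps


own = {"lamp-01": Decimal("24.99"), "hub-02": Decimal("15.50")}
scraped = {"lamp-01": parse_price("$22.49"), "hub-02": parse_price("$16.00")}
undercut = find_undercut(own, scraped)
```

Using `Decimal` rather than `float` avoids rounding surprises when comparing currency values.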

Additionally, web scraping is widely applied in sentiment analysis, where reviews, social media posts, and forum discussions are collected and analyzed to evaluate public perception of brands or products. This helps organizations respond quickly to reputation risks and evolving customer expectations.
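To illustrate the analysis step, here is a deliberately small lexicon-based scorer. The word lists and the `score_review` and `label` functions are toy assumptions made for this example; production systems usually rely on trained language models rather than fixed word lists.

```python
import re

# Tiny illustrative lexicons; real sentiment models are far richer.
POSITIVE = {"great", "love", "excellent", "fast", "reliable"}
NEGATIVE = {"bad", "broken", "slow", "terrible", "refund"}


def score_review(text):
    """Return (# positive words) - (# negative words) for one review."""
    words = re.findall(r"[a-z']+", text.lower())
    positives = sum(word in POSITIVE for word in words)
    negatives = sum(word in NEGATIVE for word in words)
    return positives - negatives


def label(score):
    """Map a numeric score to a coarse sentiment label."""
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


reviews = [
    "Great lamp, love it",
    "Arrived broken and support was slow",
    "It is a lamp",
]
labels = [label(score_review(review)) for review in reviews]
```

Aggregating these labels over time gives a rough signal of how perception of a brand or product is moving.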

Solutions / Methods

- Market research automation: Collecting large-scale data from e-commerce platforms, forums, and marketplaces to identify trends and customer behavior patterns.

- Lead generation systems: Extracting business contact details from directories and public listings to build structured prospect databases for marketing and sales teams.

- Captcha-protected data collection: When websites deploy anti-bot protections such as Cloudflare challenges or reCAPTCHA, automated captcha-solving services such as CapSolver can help keep scraping workflows running and improve data extraction success rates.
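For the lead generation method listed above, the structuring step might look like the sketch below. It assumes the listing text has already been collected from a public directory; the `extract_emails` helper, the sample listings, and the simplified email pattern are illustrative and will not match every valid address.

```python
import re

# Simplified pattern for illustration; it does not cover every valid address.
EMAIL_PATTERN = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


def extract_emails(text):
    """Return unique, lowercased email addresses in order of first appearance."""
    seen = []
    for match in EMAIL_PATTERN.findall(text):
        email = match.lower()
        if email not in seen:
            seen.append(email)
    return seen


# Hypothetical directory listings.
listings = [
    {"company": "Acme Corp", "text": "Sales: sales@acme.example or Sales@Acme.example"},
    {"company": "Beta LLC", "text": "No contact address published"},
]

prospects = [
    {"company": item["company"], "emails": extract_emails(item["text"])}
    for item in listings
]
```

Normalizing and de-duplicating at this stage keeps the prospect database clean before it reaches marketing or sales tools.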

Best Practice / Tips

- Respect website terms of service and robots.txt directives to avoid legal or ethical issues.

- Use rate limiting and proxy rotation to reduce detection risks during large-scale scraping.

- Combine scraped data with analytics or AI models to produce actionable insights rather than stopping at raw datasets.
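The first two tips can be sketched with Python's standard library: `urllib.robotparser` checks whether a path is allowed, and a small limiter enforces a minimum gap between requests. The robots.txt content, the `my-bot` user agent, the example URLs, and the `RateLimiter` class are assumptions made for this illustration; proxy rotation is not shown.

```python
import time
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; normally fetched from the target site.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Crawl-delay: 2
"""

robots = RobotFileParser()
robots.parse(ROBOTS_TXT.splitlines())


class RateLimiter:
    """Blocks so that consecutive calls to wait() are at least min_interval apart."""

    def __init__(self, min_interval, clock=time.monotonic, sleep=time.sleep):
        self.min_interval = min_interval
        self._clock = clock
        self._sleep = sleep
        self._last = None  # time of the previous request, if any

    def wait(self):
        now = self._clock()
        if self._last is not None:
            remaining = self.min_interval - (now - self._last)
            if remaining > 0:
                self._sleep(remaining)
                now = self._clock()
        self._last = now


urls = ["https://example.com/products", "https://example.com/private/data"]
allowed = [url for url in urls if robots.can_fetch("my-bot", url)]

# Honor the site's requested delay, falling back to 1 second if none is set.
limiter = RateLimiter(robots.crawl_delay("my-bot") or 1)
```

In a crawl loop, calling `limiter.wait()` before each request to a URL in `allowed` keeps the scraper within the delay the site requests.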

👉 Related:

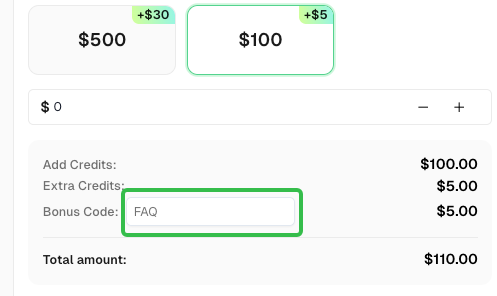

Use code

FAQwhen signing up at CapSolver to receive an additional 5% bonus on your recharge.

CapSolver FAQ — capsolver.com