What is Food Delivery Data Scraping?

Answer

Food delivery data scraping is the automated process of collecting structured information from food ordering platforms. It extracts data such as restaurant listings, menu items, pricing, reviews, and delivery metrics from food delivery apps and marketplaces. This data is widely used for analytics, market research, and competitive intelligence.

Detailed Explanation

Food delivery platforms contain large volumes of publicly visible but dynamically rendered information, including menus, pricing changes, discounts, and customer feedback. Data scraping tools simulate user behavior or parse rendered web content to systematically extract this information at scale.

Rather than exposing data through a documented, structured API, most food delivery platforms present it through interactive web or app interfaces, which makes it difficult to access directly. Scraping systems must therefore handle JavaScript-rendered content, pagination, and security protections such as rate limiting and CAPTCHA challenges. This makes the process technically complex but highly valuable for data-driven decision-making.
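As a rough illustration of the pagination and rate-limiting concerns above, the following Python sketch walks through result pages until an empty page comes back and backs off exponentially when the server signals a rate limit. The names `iter_listings`, `fetch_page`, and `RateLimited` are hypothetical; `fetch_page` stands in for whatever function actually loads one page on a given platform.

```python
import time
from typing import Callable, Iterator


class RateLimited(Exception):
    """Hypothetical error a fetcher raises when the server answers HTTP 429."""


def iter_listings(
    fetch_page: Callable[[int], list[dict]],
    max_retries: int = 3,
    base_delay: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
) -> Iterator[dict]:
    """Yield listings page by page until an empty page is returned."""
    page = 1
    while True:
        for attempt in range(max_retries + 1):
            try:
                items = fetch_page(page)
                break
            except RateLimited:
                if attempt == max_retries:
                    raise  # give up after the last allowed retry
                # Exponential backoff: base_delay, 2x, 4x, ...
                sleep(base_delay * 2 ** attempt)
        if not items:
            return  # an empty page is assumed to mark the end of results
        yield from items
        page += 1
```

Passing the page loader and the `sleep` function in as parameters keeps the loop independent of any particular HTTP client and makes it easy to exercise without network access.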

The collected datasets are often used to analyze restaurant performance, identify pricing trends, monitor competitor strategies, and evaluate customer sentiment. In large-scale operations, this data becomes a foundation for predictive analytics and business optimization strategies.
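One simple form of pricing-trend analysis is comparing two snapshots of the same menu. The sketch below assumes each snapshot has already been reduced to a mapping from item name to price; the function name `price_changes` and the sample data are illustrative only.

```python
def price_changes(old: dict[str, float], new: dict[str, float]) -> dict[str, float]:
    """Return the percentage price change for items present in both snapshots."""
    changes = {}
    for item, old_price in old.items():
        # Skip items that disappeared and avoid dividing by zero.
        if item in new and old_price > 0:
            changes[item] = round((new[item] - old_price) / old_price * 100, 2)
    return changes


last_week = {"Margherita": 10.0, "Cola": 2.0}
this_week = {"Margherita": 11.0, "Cola": 2.0, "Tiramisu": 5.0}
print(price_changes(last_week, this_week))
```

Items that appear in only one snapshot are ignored here; a fuller pipeline would likely report them separately as added or removed menu entries.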

Solutions / Methods

- Browser automation scraping: Headless browsers and similar tools simulate real user interactions to load dynamic restaurant and menu data.

- API reverse engineering: Some systems analyze hidden or internal API calls to retrieve structured delivery data more efficiently.

- CAPTCHA and security challenge handling: Modern platforms use protection systems such as CAPTCHA and bot detection. Solutions like CapSolver can help automate CAPTCHA solving and improve data extraction reliability in compliant scraping workflows.
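Whichever method retrieves the data, the result usually needs to be normalized into flat records. The sketch below shows one way this might look for a JSON response. The payload shape (`restaurant`, `sections`, `items`, `price_cents`) is an assumption made for illustration and does not describe any real platform's format.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class MenuItem:
    restaurant: str
    name: str
    price: float


def parse_menu(payload: str) -> list[MenuItem]:
    """Flatten a (hypothetical) nested menu payload into MenuItem records."""
    data = json.loads(payload)
    restaurant = data["restaurant"]["name"]
    items = []
    for section in data.get("sections", []):
        for raw in section.get("items", []):
            # Assumption: prices arrive as integer cents.
            items.append(MenuItem(restaurant, raw["name"], raw["price_cents"] / 100))
    return items


sample = json.dumps({
    "restaurant": {"name": "Example Pizza"},
    "sections": [
        {"title": "Mains", "items": [{"name": "Margherita", "price_cents": 1250}]},
        {"title": "Drinks", "items": [{"name": "Cola", "price_cents": 300}]},
    ],
})
for item in parse_menu(sample):
    print(item)
```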

Best Practice / Tips

When collecting food delivery data, it is important to manage request rates carefully, respect website policies, and ensure ethical data usage. Using rotating proxies, request throttling, and structured extraction pipelines can significantly improve stability and reduce blocking risks.
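Request throttling and proxy rotation can be sketched in a few lines of Python. The `Throttle` class and `proxy_cycle` helper below are hypothetical names, and the proxy addresses are placeholders; the sketch only enforces a minimum gap between requests and hands out proxies in round-robin order.

```python
import itertools
import time
from typing import Callable, Iterable, Iterator, Optional


class Throttle:
    """Enforce a minimum interval (in seconds) between consecutive requests."""

    def __init__(
        self,
        min_interval: float,
        clock: Callable[[], float] = time.monotonic,
        sleep: Callable[[float], None] = time.sleep,
    ) -> None:
        self.min_interval = min_interval
        self._clock = clock
        self._sleep = sleep
        self._last: Optional[float] = None

    def wait(self) -> None:
        """Block until at least min_interval has passed since the last call."""
        now = self._clock()
        if self._last is not None:
            remaining = self.min_interval - (now - self._last)
            if remaining > 0:
                self._sleep(remaining)
                now = self._clock()
        self._last = now


def proxy_cycle(proxies: Iterable[str]) -> Iterator[str]:
    """Return an endless round-robin iterator over the given proxies."""
    return itertools.cycle(list(proxies))


throttle = Throttle(min_interval=2.0)
proxies = proxy_cycle(["http://proxy-a.example:8080", "http://proxy-b.example:8080"])
# Before each request: call throttle.wait(), then pick next(proxies).
```

A fixed interval is the simplest policy. A site's published rate limits or robots.txt crawl-delay guidance, where available, are a better source for the actual value.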

👉 Related:

CapSolver FAQ — capsolver.com

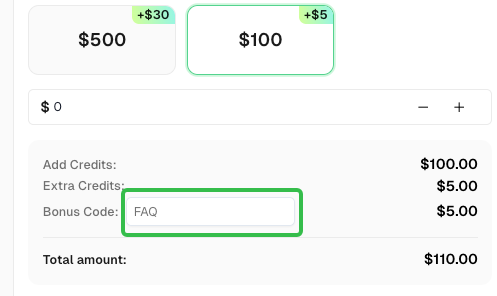

Use code FAQ when signing up at CapSolver to receive an additional 5% bonus on your recharge.