How to select the entire section of an item instead of partial selection in web scraping tools

Answer

To select the entire item section instead of a partial element, you must target the parent container that wraps all sub-elements. In web scraping tools, this is done by selecting the main item block or adjusting the selector hierarchy using XPath or CSS selectors so that the full node structure is captured rather than a single child element.

Detailed Explanation

Web pages are built from nested HTML elements. Each item (for example, a product card or list entry) typically consists of a parent container and several child elements such as a title, price, image, and link. When scraping, clicking directly on a child element (such as a piece of text or an image) extracts only that fragment, not the full structured item.
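The difference between a partial and a full selection can be sketched with Python's standard library. The markup, class names, and URLs below are hypothetical, and the snippet assumes well-formed markup so that `xml.etree.ElementTree` can parse it; real pages usually need a tolerant HTML parser instead.

```python
import xml.etree.ElementTree as ET

# Hypothetical, well-formed markup for a grid with one product card.
HTML = """
<div class="grid">
  <div class="product">
    <h2 class="title">Blue Mug</h2>
    <span class="price">$12.00</span>
    <img src="/img/mug.png" />
    <a href="/products/mug">Details</a>
  </div>
</div>
"""

root = ET.fromstring(HTML)

# Partial selection: targeting the child yields only that one fragment.
title_only = root.find(".//h2[@class='title']")
print(title_only.text)

# Full selection: targeting the parent keeps every sub-element together.
card = root.find(".//div[@class='product']")
child_tags = [child.tag for child in card]
print(child_tags)
```

The first selector returns a single heading, while the second returns the container whose children include the title, price, image, and link.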

To avoid partial selection, you need to understand the DOM hierarchy. The goal is to identify the common parent element that contains all relevant sub-elements. In scraping tools, this parent is often visualized as a highlighted block, and selecting it groups all nested data into one record. Techniques such as XPath expressions (e.g., selecting a div that wraps all item components) or “loop item” selection help define this structure accurately. Advanced tools also allow relative selection inside loops, which keeps fields consistent across multiple items on a page.
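One way to picture "finding the common parent" is to start at the element that was clicked and walk upward until an ancestor contains every field you need. The following is only a sketch under assumptions: the markup is hypothetical and well-formed, and `REQUIRED_TAGS` and `find_item_container` are illustrative names, not part of any scraping tool.

```python
import xml.etree.ElementTree as ET

# Hypothetical list with two items; the fields sit at different depths.
HTML = """
<ul class="results">
  <li class="item">
    <div class="info"><h3>Blue Mug</h3><span class="price">$12.00</span></div>
    <a href="/products/mug"><img src="/img/mug.png" /></a>
  </li>
  <li class="item">
    <div class="info"><h3>Red Bowl</h3><span class="price">$9.50</span></div>
    <a href="/products/bowl"><img src="/img/bowl.png" /></a>
  </li>
</ul>
"""

# Assumed set of tags that one complete item must contain.
REQUIRED_TAGS = {"h3", "span", "img", "a"}


def find_item_container(root, clicked):
    """Walk up from `clicked` to the nearest ancestor holding every required tag."""
    # ElementTree has no parent pointers, so build a child -> parent map first.
    parent_of = {child: parent for parent in root.iter() for child in parent}
    node = clicked
    while node is not None:
        tags_inside = {el.tag for el in node.iter()}
        if REQUIRED_TAGS <= tags_inside:
            return node
        node = parent_of.get(node)
    return None


root = ET.fromstring(HTML)
clicked = root.find(".//h3")  # simulates clicking the first title
container = find_item_container(root, clicked)
print(container.tag, container.get("class"))
```

Stopping at the nearest qualifying ancestor matters: climbing one level further would select the whole list rather than a single item.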

Incorrect selection usually happens when the scraper captures only a text node or a single attribute instead of the container element. This leads to incomplete datasets and broken structure, especially when scraping lists or e-commerce grids.

Solutions / Methods

- Select parent container element: Instead of clicking text or image nodes, identify the outer HTML block that contains all sub-elements of one item.

- Use structured selectors (XPath/CSS): Refine selectors to target full nodes using hierarchy rules such as parent-child relationships or indexed positions.

- Use loop-based extraction with full node selection: Define a repeating item pattern and make sure each loop iteration captures the full element group. In automation workflows, combining this with proper extraction steps produces consistent structured output. For complex pages with dynamic loading or protection layers, solutions like CapSolver can help keep automation running by solving security challenges during scraping workflows.
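The loop-based method above can be sketched as follows: one selector matches every item container, and each field is then read with a selector relative to that container. The markup, class names, and the `extract_items` helper are hypothetical, and the snippet assumes well-formed markup so the standard library can parse it.

```python
import xml.etree.ElementTree as ET

# Hypothetical product grid with two cards.
HTML = """
<div class="grid">
  <div class="product">
    <h2>Blue Mug</h2>
    <span class="price">$12.00</span>
    <img src="/img/mug.png" />
    <a href="/products/mug">Details</a>
  </div>
  <div class="product">
    <h2>Red Bowl</h2>
    <span class="price">$9.50</span>
    <img src="/img/bowl.png" />
    <a href="/products/bowl">Details</a>
  </div>
</div>
"""


def extract_items(root):
    """Return one record per product container, using relative selectors."""
    records = []
    # Loop item: every container that wraps one complete product.
    for card in root.findall(".//div[@class='product']"):
        img = card.find(".//img")
        link = card.find(".//a")
        records.append({
            # Paths start with "." so they are resolved inside this card only.
            "title": card.findtext(".//h2"),
            "price": card.findtext(".//span[@class='price']"),
            "image": img.get("src") if img is not None else None,
            "link": link.get("href") if link is not None else None,
        })
    return records


records = extract_items(ET.fromstring(HTML))
for record in records:
    print(record)
```

Because every field is looked up relative to its own container, a title can never be paired with the price of a neighbouring item.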

Best Practice / Tips

Always validate your selector by checking whether all sub-fields (title, image, price, link) are included in a single extraction result. Avoid selecting deeply nested child elements unless you intentionally need isolated data points. Testing selectors on multiple items helps confirm that they stay consistent across dynamic layouts.
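A small check along these lines can make that validation repeatable. It is a minimal sketch, assuming hypothetical well-formed markup; `FIELD_PATHS`, `missing_fields`, and `validate` are illustrative names chosen for this example.

```python
import xml.etree.ElementTree as ET

# Hypothetical product grid with two complete cards.
HTML = """
<div class="grid">
  <div class="product">
    <h2>Blue Mug</h2><span class="price">$12.00</span>
    <img src="/img/mug.png" /><a href="/products/mug">Details</a>
  </div>
  <div class="product">
    <h2>Red Bowl</h2><span class="price">$9.50</span>
    <img src="/img/bowl.png" /><a href="/products/bowl">Details</a>
  </div>
</div>
"""

# Assumed relative paths for the sub-fields every item should expose.
FIELD_PATHS = {
    "title": ".//h2",
    "price": ".//span[@class='price']",
    "image": ".//img",
    "link": ".//a",
}


def missing_fields(item):
    """List the field names that cannot be found inside `item`."""
    return [name for name, path in FIELD_PATHS.items() if item.find(path) is None]


def validate(root, item_path):
    """Map item index -> missing fields for every item the selector matches."""
    report = {}
    for index, item in enumerate(root.findall(item_path)):
        missing = missing_fields(item)
        if missing:
            report[index] = missing
    return report


root = ET.fromstring(HTML)
good = validate(root, ".//div[@class='product']")  # container selector
bad = validate(root, ".//h2")                      # child-only selector
print(good)
print(bad)
```

An empty report suggests the selector captures whole items; a report listing every field as missing is a sign that the selector landed on a child element instead of the container.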


Use code

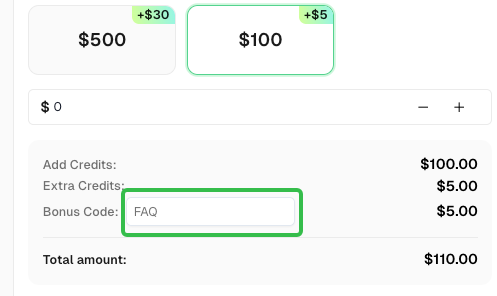

FAQwhen signing up at CapSolver to receive an additional 5% bonus on your recharge.

CapSolver FAQ - capsolver.com