What is the Python Requests Library?

Answer

The Python Requests library is a third-party HTTP client used to send web requests such as GET, POST, PUT, and DELETE in a simple and readable way. It abstracts low-level networking complexity, making it easier to interact with APIs, retrieve web data, and build automation or scraping workflows in Python.

Detailed Explanation

The Requests library acts as a high-level wrapper over HTTP communication, allowing developers to interact with web servers without manually handling sockets or query encoding. Instead of dealing with complex networking code, users can call intuitive functions like requests.get() or requests.post().
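As a minimal sketch of the query encoding mentioned above, the request below is built but not sent, so the final URL can be inspected. The endpoint `https://api.example.com/search` and its parameters are hypothetical and used only for illustration.

```python
import requests

# Hypothetical endpoint and parameters, used only for illustration.
BASE_URL = "https://api.example.com/search"
params = {"q": "python requests", "page": 2}

# Build the request without sending it, so the encoded URL can be inspected.
prepared = requests.Request("GET", BASE_URL, params=params).prepare()
print(prepared.url)

# Sending the same request would be a single call:
# response = requests.get(BASE_URL, params=params, timeout=10)
```

The space in the `q` value is encoded by the library, with no manual string building needed.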

Under the hood, it manages connection pooling, cookies, SSL verification, headers, and response parsing. This makes it especially useful for REST API integration, where structured data such as JSON is exchanged between client and server. It also simplifies error handling by providing easy access to status codes and response content.
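One possible way to combine status-code checks with JSON parsing is sketched below. The helper name `fetch_json` is an assumption made for this example and is not part of Requests; the calls it uses (`requests.get`, `raise_for_status()`, `response.json()`) are.

```python
import requests


def fetch_json(url, timeout=10):
    """Return the decoded JSON body of a GET request, or None on failure."""
    try:
        response = requests.get(url, timeout=timeout)
        # Raises requests.HTTPError for 4xx and 5xx status codes.
        response.raise_for_status()
    except requests.HTTPError as exc:
        print(f"Server returned an error status: {exc.response.status_code}")
        return None
    except requests.RequestException as exc:
        # Base class for connection errors, timeouts, and similar failures.
        print(f"Request failed: {exc}")
        return None

    try:
        return response.json()
    except ValueError:
        # The body was not valid JSON.
        print("Response body is not valid JSON")
        return None
```

A call such as `fetch_json("https://api.example.com/items/1")` (a hypothetical URL) would return a dictionary on success and `None` otherwise. Returning `None` keeps the sketch short; real code may prefer to let the exceptions propagate.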

Because many modern websites use bot-detection systems and dynamic protection layers, HTTP requests may sometimes be blocked or challenged. In such scenarios, developers often combine Requests with proxy management or automated captcha-solving services such as CapSolver to maintain reliable access during large-scale scraping or automation tasks.

Solutions / Methods

- Basic HTTP Requests: Use built-in methods like GET and POST to fetch or send data to web servers, ideal for APIs and simple scraping tasks.

- Session & Header Management: Use persistent sessions, custom headers, and authentication tokens to simulate real browser behavior and improve request reliability.

- Handling Security Protections: When requests are blocked by CAPTCHA or bot-detection systems, integrate an automated solving service such as CapSolver to handle verification challenges and keep data collection workflows running.
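The session and header management described in the list above could look roughly like this. The function name `build_session`, the client name `my-client/1.0`, and the use of a Bearer token are assumptions for illustration; the target API may expect a different authentication scheme.

```python
import requests


def build_session(token):
    """Create a Session that sends the same headers on every request."""
    session = requests.Session()
    session.headers.update({
        "User-Agent": "my-client/1.0",       # hypothetical client name
        "Accept": "application/json",
        "Authorization": f"Bearer {token}",  # assumes Bearer-token auth
    })
    return session
```

Every request made through the returned session, for example `session.get(url, timeout=10)`, carries these headers, and cookies set by the server persist between calls.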

Best Practice / Tips

Always set an explicit timeout, because Requests applies none by default and a stalled server can otherwise hang the call indefinitely. Rotate headers like User-Agent for better compatibility, and reuse a session so that connections are pooled across requests. For large-scale scraping, combine Requests with proxies and retry strategies to reduce failure rates and improve stability.
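A rough sketch of these tips follows, using the `Retry` class from urllib3 (the library Requests is built on) mounted through `HTTPAdapter`. The helper names, the retry count, the backoff factor, the status list, and the timeout values are all illustrative assumptions, not recommended settings.

```python
import requests
from requests.adapters import HTTPAdapter
from urllib3.util.retry import Retry

# (connect, read) timeouts in seconds; illustrative values only.
DEFAULT_TIMEOUT = (3.05, 10)


def build_retrying_session(proxies=None):
    """Return a Session that retries transient failures with backoff."""
    retry = Retry(
        total=3,                                     # at most 3 retries
        backoff_factor=0.5,                          # growing delay between tries
        status_forcelist=[429, 500, 502, 503, 504],  # retry on these statuses
    )
    adapter = HTTPAdapter(max_retries=retry)
    session = requests.Session()
    session.mount("https://", adapter)
    session.mount("http://", adapter)
    if proxies:
        # Example shape: {"https": "http://proxy.example.com:8080"}
        session.proxies.update(proxies)
    return session


def get_with_timeout(session, url, **kwargs):
    """Send a GET request, applying DEFAULT_TIMEOUT unless one is given."""
    kwargs.setdefault("timeout", DEFAULT_TIMEOUT)
    return session.get(url, **kwargs)
```

The wrapper exists because a session has no session-wide timeout setting; the timeout has to be passed on each request.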

👉 Related:

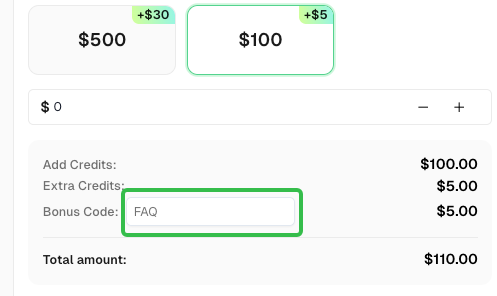

Use code

FAQwhen signing up at CapSolver to receive an additional 5% bonus on your recharge.

CapSolver FAQ — capsolver.com