Scaling Data Collection for LLM Training: Solving CAPTCHAs at Scale

Lucas Mitchell

Automation Engineer

TL;DR:

- Data Quality is King: High-quality data collection is the foundation of effective LLM training.

- CAPTCHA Barriers: Modern websites use sophisticated challenges that stall automated data extraction.

- Scalability Matters: Manual intervention is impossible when collecting billions of tokens for AI models.

- CapSolver Solution: Automated tools provide the speed and reliability needed for enterprise-level data gathering.

- Cost Efficiency: Outsourcing CAPTCHA solving reduces infrastructure overhead and accelerates development cycles.

Introduction

Building a competitive Large Language Model (LLM) requires access to massive, diverse, and high-quality datasets. Most of this information resides on the open web, protected by various security layers. Data collection at this magnitude presents technical hurdles that traditional scraping methods cannot overcome. Developers often find their automated systems blocked by complex verification puzzles. These barriers exist to protect site integrity, but they also hinder legitimate researchers and AI developers. This article explores how to scale data collection for LLM training by addressing the persistent challenge of solving CAPTCHAs at scale. We will examine the intersection of web automation and machine learning infrastructure. Readers will learn how to integrate CapSolver to maintain a steady flow of training data without manual bottlenecks.

The Role of Web Data in LLM Training

Large Language Models thrive on the breadth of information available across the internet. From scientific journals to forum discussions, every piece of text contributes to the model's reasoning capabilities. However, the process of gathering this data is becoming increasingly difficult. Many high-value sources implement strict rate limits and verification checks. These measures are designed to distinguish between human users and automated scripts. For AI teams, these checks represent a significant friction point in their data pipeline.

The volume of data required for modern models is staggering. For instance, models like GPT-4 are reportedly trained on trillions of tokens. Collecting this amount of information requires a highly distributed and resilient scraping infrastructure. When a scraper encounters a verification puzzle, the entire process can grind to a halt. This delay is not just a minor inconvenience; it can lead to stale datasets and increased operational costs. A continuous stream of collected data is essential for maintaining the competitive edge of an AI product.

Common Challenges in Large-Scale Data Extraction

Scaling your data collection efforts involves more than just adding servers. You must navigate a landscape of evolving security protocols. Many websites now use behavioral analysis to detect automation. When a script behaves too predictably, it triggers a CAPTCHA. These challenges have evolved from simple text recognition to complex image classification and puzzle-solving tasks.

| Challenge Category | Impact on Data Collection | Mitigation Strategy |
|---|---|---|
| IP Rate Limiting | Blocks requests from specific data centers. | Use of residential proxies and rotation. |
| Dynamic Content | Content only loads after JavaScript execution. | Headless browsers like Playwright or Puppeteer. |
| Verification Puzzles | Stops automated flows until solved. | Integration of automated CAPTCHA solvers. |
| Fingerprinting | Identifies scrapers based on browser headers. | Header randomization and stealth plugins. |
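The proxy-rotation row of the table can be illustrated with a short Python sketch. It is a minimal illustration, not a production client: `fetch_with_rotation` and the injected `fetch` callable (which is assumed to return a `(status_code, body)` pair) are hypothetical names, and treating HTTP 403 and 429 as "blocked" is an assumption about how the target site signals rate limiting.

```python
import itertools


def fetch_with_rotation(url, proxies, fetch, max_attempts=5):
    """Retry `url` through successive proxies until one is not blocked.

    `fetch(url, proxy)` is a caller-supplied function (hypothetical here)
    that returns a (status_code, body) tuple.
    """
    if not proxies:
        raise ValueError("at least one proxy is required")
    pool = itertools.cycle(proxies)  # round-robin over the proxy list
    for _ in range(max_attempts):
        proxy = next(pool)
        status, body = fetch(url, proxy)
        if status == 200:
            return body
        if status not in (403, 429):  # assumed "blocked" status codes
            raise RuntimeError(f"unexpected status {status} via {proxy}")
    raise RuntimeError(f"all {max_attempts} attempts were blocked")
```

Passing the transport in as a parameter keeps the rotation logic independent of any particular HTTP library and makes it easy to exercise with a stub.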

Many developers attempt to build their own solvers using basic machine learning models. While this might work for simple puzzles, it fails against modern, AI-driven security systems. Maintaining an in-house solver requires constant updates and a dedicated team of researchers. This takes focus away from the core task of LLM training and refinement.

Why Solving CAPTCHAs at Scale is Critical

In the context of LLM development, time is a critical resource. Every hour spent fixing a broken scraper is an hour lost in the training cycle. Automated data collection must be robust enough to handle thousands of requests per second. If your system cannot handle verification challenges automatically, your scaling potential is capped by human intervention.

Modern AI agents and scrapers need a reliable way to navigate these hurdles. This is where specialized services become indispensable. By using an API-based approach, developers can offload the complexity of CAPTCHA solving. This allows the scraping logic to remain simple and focused on data extraction. For those interested in the technical implementation, understanding why web automation keeps failing on CAPTCHAs is the first step toward building a more resilient system.

Integrating CapSolver into Your AI Data Pipeline

CapSolver provides a robust API that integrates directly into existing automation frameworks. Whether you are using Python, Node.js, or Go, the integration process is straightforward. The service supports a wide range of challenges, including reCAPTCHA and specialized enterprise versions. This versatility is vital for teams collecting data from diverse global sources.

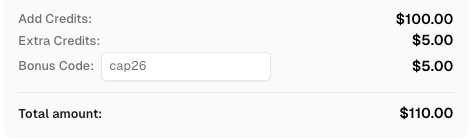

Use code CAP26 when signing up at CapSolver to receive bonus credits!

When a scraper encounters a challenge, it sends the site key and URL to the CapSolver API. The service then returns the solution token, which the scraper submits to the website. This entire process happens in seconds, ensuring that the data flow remains uninterrupted. This level of automation is what enables the creation of high-quality datasets for machine learning at an industrial scale.
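The request/response flow described above can be sketched in Python. This is a minimal sketch under stated assumptions, not CapSolver's official client: the base URL, the `createTask` and `getTaskResult` endpoints, the `ReCaptchaV2TaskProxyLess` task type, and the JSON field names are assumptions that should be checked against CapSolver's API documentation. `solve_recaptcha`, `http_post`, and the injectable `post` parameter are hypothetical names introduced for illustration.

```python
import json
import time
import urllib.request

API_BASE = "https://api.capsolver.com"  # assumed base URL; confirm in the docs


def http_post(url, payload):
    """POST a JSON payload and return the decoded JSON response."""
    req = urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(req, timeout=30) as resp:
        return json.loads(resp.read().decode("utf-8"))


def solve_recaptcha(api_key, site_url, site_key, post=http_post,
                    poll_interval=2.0, max_polls=60):
    """Send the site key and URL, then poll until a solution token is ready."""
    created = post(f"{API_BASE}/createTask", {
        "clientKey": api_key,
        "task": {
            "type": "ReCaptchaV2TaskProxyLess",  # assumed task type
            "websiteURL": site_url,
            "websiteKey": site_key,
        },
    })
    if created.get("errorId"):
        raise RuntimeError(f"createTask failed: {created}")
    task_id = created["taskId"]

    for _ in range(max_polls):
        result = post(f"{API_BASE}/getTaskResult",
                      {"clientKey": api_key, "taskId": task_id})
        if result.get("errorId"):
            raise RuntimeError(f"getTaskResult failed: {result}")
        if result.get("status") == "ready":
            # Assumed response shape: the token sits under "solution".
            return result["solution"]["gRecaptchaResponse"]
        time.sleep(poll_interval)
    raise TimeoutError("solver did not return a token in time")
```

The scraper would then submit the returned token with its form or request to the target site. Bounding the polling loop with `max_polls` keeps a stalled task from blocking a worker indefinitely.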

Comparison Summary: In-House vs. CapSolver

Choosing between building a custom solution and using a professional service is a common dilemma for AI startups. The following table summarizes the key differences.

| Feature | In-House Development | CapSolver API |
|---|---|---|
| Initial Cost | High (Engineering hours) | Low (Pay-per-use) |
| Maintenance | Constant updates required | Managed by provider |
| Success Rate | Variable and often low | High (99.9% uptime) |
| Scalability | Limited by local hardware | Virtually unlimited |
| Focus | Distracts from AI research | Enables core development |

For most organizations, the total cost of ownership for an in-house solver is significantly higher. The hidden costs of maintenance and lost data often outweigh the subscription fees of a specialized service.

Technical Implementation for AI Agents

Modern AI agents, such as those built on LangChain or AutoGPT, often need to browse the web to find real-time information. These agents are particularly susceptible to being blocked because their browsing patterns are distinct. Integrating a solver into an agent's toolset allows it to complete tasks that would otherwise be impossible.

For example, an agent tasked with gathering the latest research papers might encounter a verification wall on a digital library. With an automated solver, the agent can solve the CAPTCHA and continue its search. This capability is essential for creating truly autonomous systems. Developers can explore more about LLMs enterprise CAPTCHA AI to see how these technologies complement each other in professional environments.

Data Quality and Filtering After Collection

Solving the CAPTCHA is only the first part of the journey. Once the data is collected, it must be cleaned and filtered. Raw web data often contains noise, such as advertisements, navigation menus, and duplicate content. For LLM training, this noise can degrade the model's performance.

AI teams use various techniques to ensure data quality. This includes using smaller models to score the relevance of the text or applying heuristic filters to remove low-quality snippets. The goal is to create a dataset that is both massive and clean. The synergy between efficient data collection and rigorous filtering is what produces top-tier AI models. You can find more practical advice on this in the guide on AI & LLM practice.
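As an illustration of the heuristic filtering mentioned above, the following Python sketch drops very short or symbol-heavy snippets and removes exact duplicates after light normalization. The thresholds (`min_words`, `max_symbol_ratio`) and the function names are hypothetical choices for this example, not values taken from any particular pipeline; model-based relevance scoring is out of scope here.

```python
import hashlib
import re


def is_low_quality(text, min_words=20, max_symbol_ratio=0.3):
    """Flag snippets that are too short or dominated by symbols."""
    if len(text.split()) < min_words:
        return True
    non_space = [c for c in text if not c.isspace()]
    if not non_space:
        return True
    symbols = sum(1 for c in non_space if not c.isalnum())
    return symbols / len(non_space) > max_symbol_ratio


def normalize(text):
    """Lowercase and collapse whitespace so trivial variants hash alike."""
    return re.sub(r"\s+", " ", text).strip().lower()


def filter_and_dedupe(docs, **thresholds):
    """Keep the first copy of each document that passes the heuristics."""
    seen = set()
    kept = []
    for doc in docs:
        if is_low_quality(doc, **thresholds):
            continue
        digest = hashlib.sha256(normalize(doc).encode("utf-8")).hexdigest()
        if digest in seen:
            continue
        seen.add(digest)
        kept.append(doc)
    return kept
```

Hashing the normalized text catches only exact duplicates; near-duplicate detection would need a fuzzier technique.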

Ethical Considerations in Automated Data Collection

While the technical ability to collect data is vast, it must be balanced with ethical considerations. Respecting robots.txt files and not overloading small websites are standard best practices. AI developers should strive to be good citizens of the web. This includes providing clear user-agent strings and adhering to data privacy regulations like GDPR.
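Checking robots.txt before fetching a page can be sketched with Python's standard `urllib.robotparser` module. The user-agent string and the helper names `build_parser` and `allowed` are hypothetical; a real crawler would download each site's robots.txt rather than pass it in as a string.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical, clearly identifying user-agent string.
USER_AGENT = "ExampleResearchBot/1.0 (+https://example.com/bot-info)"


def build_parser(robots_txt):
    """Parse the text of a robots.txt file into a reusable parser."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


def allowed(parser, url, user_agent=USER_AGENT):
    """Return True if the robots.txt rules permit fetching `url`."""
    return parser.can_fetch(user_agent, url)
```

A crawler could call `allowed(...)` before every request and honor `parser.crawl_delay(USER_AGENT)` when the site declares one, which helps avoid overloading small websites.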

Automated CAPTCHA-solving tools should be used responsibly. The objective is to facilitate the creation of beneficial AI technologies while minimizing the impact on the target websites. Many researchers argue that the public benefit of advanced LLMs justifies the large-scale collection of publicly available data. This debate continues to evolve as the technology matures.

Future Trends in AI Data Gathering

The landscape of data collection is shifting toward more intelligent and adaptive systems. We are seeing the rise of multi-modal data collection, where models are trained on a mix of text, images, and video. This increases the complexity of the scraping task, as different types of content require different handling strategies.

Furthermore, as websites become better at detecting AI, the tools used to collect data must also become more sophisticated. The "cat and mouse" game between security systems and automation tools will likely continue. Services that stay ahead of these trends will remain essential for the AI industry. For a deeper look into the future, consider reading about the AI-LLM future solution and how it impacts the broader ecosystem.

To maintain a competitive edge, organizations must focus on optimizing AI infrastructure at scale. This includes ensuring that every component of the data pipeline, from proxy management to CAPTCHA solving, is as efficient as possible. By leveraging specialized tools, teams can build large-scale web data repositories that serve as the foundation for future breakthroughs. As noted in recent discussions on scaling storage for AI training, the ability to handle massive data transfers is just as important as the compute power itself.

Conclusion

Scaling data collection for LLM training is a foundational challenge for the next generation of AI. By automating CAPTCHA solving at scale, developers can ensure their models have access to the vast wealth of information on the internet. CapSolver offers a reliable, cost-effective, and scalable solution that integrates into any modern data pipeline. This allows AI teams to focus on what they do best: building intelligent systems that change the world. Don't let verification puzzles slow down your innovation. Start using CapSolver today to streamline your data acquisition and accelerate your model training.

FAQ

1. Why is automated CAPTCHA solving necessary for LLM training?

LLM training requires trillions of tokens. Manually solving every verification puzzle would make it impossible to collect data at the required speed and scale.

2. Does using a solver affect the quality of the collected data?

No, the solver only handles the verification hurdle. The quality of the data depends on your scraping logic and the subsequent filtering processes you apply to the raw text.

3. Is it difficult to integrate CapSolver into an existing Python scraper?

The integration is very simple. CapSolver provides a well-documented API and SDKs that allow you to add puzzle-solving capabilities with just a few lines of code.

4. Can CapSolver handle the latest versions of reCAPTCHA?

Yes, the service is constantly updated to support the newest and most complex versions of all major verification systems used by high-traffic websites.

5. What are the main benefits of using an API over building a custom solver?

The main benefits include higher success rates, zero maintenance overhead, instant scalability, and significantly lower total costs compared to hiring a dedicated engineering team.
