AI Agents in SEO: From Keyword Research to Automated Data Collection

Nikolai Smirnov

Software Development Lead

TL;DR

- AI agents in SEO are autonomous systems that handle keyword research, content audits, rank tracking, and data collection with minimal human input.

- The AI agents industry is growing at a 49.6% CAGR and is projected to reach $182.97 billion by 2033, making AI-powered SEO agents a mainstream infrastructure choice.

- These agents work through a perceive → reason → act loop, connecting to search APIs, crawlers, and analytics platforms.

- Automated data collection pipelines frequently encounter CAPTCHA challenges on protected websites, which can stall the entire workflow.

- CapSolver's AI-powered CAPTCHA solving API integrates directly into these pipelines, keeping data collection running without manual interruption.

Introduction

SEO has always been a data-intensive discipline. Ranking well requires continuous keyword monitoring, competitor analysis, content auditing, and backlink tracking, tasks that traditionally consumed dozens of hours per week. AI agents in SEO change that equation. These autonomous systems can plan, execute, and adapt across complex workflows without waiting for human instruction at every step. This article explains what AI-powered SEO agents actually are, how they work under the hood, where they fit into real SEO workflows, and what technical obstacles (including CAPTCHA walls) teams need to account for when deploying them at scale.

What Are AI Agents in SEO?

An AI agent is a software system that perceives its environment, reasons about a goal, and takes actions to achieve it — then evaluates the result and adjusts. Unlike a simple automation script that follows a fixed sequence, an agent can handle branching decisions, retry failed steps, and call external tools dynamically.

In the context of SEO, an AI-powered SEO agent might be given a goal like: "Identify keyword gaps between our blog and the top three competitors for the topic 'project management software'." The agent will then:

- Query a keyword research API for target terms

- Crawl competitor pages to extract their ranking keywords

- Cross-reference both datasets to surface gaps

- Generate a prioritized list of content opportunities

- Optionally draft a content brief and push it to a CMS

No human needs to manage each step. The agent handles tool selection, error recovery, and output formatting on its own.

This is fundamentally different from traditional SEO tools, which surface data but require a human to interpret and act. AI agents in SEO close that loop.
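The cross-referencing step in the list above can be sketched in a few lines. This is a minimal illustration, not a production implementation: the function name `keyword_gaps`, the domains, and the keyword lists are all hypothetical, and a real agent would fill these inputs from a keyword API and a crawler.

```python
def keyword_gaps(our_keywords, competitor_keywords):
    """Return (keyword, competitor_count) pairs for terms competitors cover and we do not."""
    ours = {k.lower().strip() for k in our_keywords}
    counts = {}
    for keywords in competitor_keywords.values():
        # De-duplicate per competitor so each domain counts once per keyword.
        for kw in {k.lower().strip() for k in keywords}:
            if kw not in ours:
                counts[kw] = counts.get(kw, 0) + 1
    # Terms covered by more competitors come first; ties are sorted alphabetically.
    return sorted(counts.items(), key=lambda item: (-item[1], item[0]))


# Hypothetical sample data standing in for API and crawl results.
ours = ["gantt chart", "kanban board"]
competitors = {
    "competitor-a.example": ["Gantt chart", "resource planning", "sprint planning"],
    "competitor-b.example": ["resource planning", "kanban board"],
}
gaps = keyword_gaps(ours, competitors)
print(gaps)
```

Ranking gaps by how many competitors cover a term is only one possible prioritization. Search volume or business relevance could be added as further sort keys.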

The AI Agents Industry: Scale and Context

Before diving into SEO-specific applications, it helps to understand the broader landscape. The global AI agents industry was valued at $7.63 billion in 2025 and is projected to reach $182.97 billion by 2033, growing at a 49.6% CAGR. This growth is driven by adoption across marketing, finance, healthcare, and logistics, with SEO automation representing one of the fastest-moving verticals.

BCG's analysis of AI agents identifies autonomous planning and multi-tool orchestration as the two capabilities that separate true agents from simple chatbots. Both are directly relevant to SEO workflows, where tasks span multiple data sources and require conditional logic.

How AI-Powered SEO Agents Work

The Core Loop

Every AI-powered SEO agent operates on a three-phase cycle:

| Phase | What Happens |
|---|---|
| Perceive | The agent ingests inputs: search queries, ranking data, site crawl results, competitor URLs |
| Reason | An LLM or planning module decides which tools to call and in what order |
| Act | The agent executes: API calls, web requests, content generation, database writes |

After acting, the agent evaluates the output against its goal and either continues, retries, or escalates to a human reviewer.
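To make the cycle concrete, here is a small sketch of a perceive, reason, act loop with a final evaluation step. It rests on several assumptions: `run_agent`, the `primary` and `fallback` tool names, and the sample rankings are hypothetical, and the "reason" step is a simple rule where a real agent would typically consult an LLM or planner.

```python
def run_agent(keywords, tools, max_cycles=5):
    """Collect a ranking for every keyword, escalating if the cycle budget runs out."""
    rankings, attempts = {}, {}
    for _ in range(max_cycles):
        # Perceive: compare what is known so far against the goal.
        missing = [kw for kw in keywords if kw not in rankings]
        if not missing:
            return "done", rankings
        # Reason: pick a tool per keyword (rule-based stand-in for an LLM planner).
        plan = [(kw, "fallback" if attempts.get(kw) else "primary") for kw in missing]
        # Act: call the chosen tools and store any usable result.
        for kw, tool_name in plan:
            attempts[kw] = attempts.get(kw, 0) + 1
            position = tools[tool_name](kw)
            if position is not None:
                rankings[kw] = position
    # Evaluate: anything still missing is handed to a human reviewer.
    missing = [kw for kw in keywords if kw not in rankings]
    return ("done" if not missing else "escalate"), rankings


# Hypothetical tools: the primary source has no data for one keyword.
tools = {
    "primary": {"project management software": 3}.get,
    "fallback": {"crm software": 7}.get,
}
status, rankings = run_agent(["project management software", "crm software"], tools)
print(status, rankings)
```

The cycle limit is what keeps the loop from retrying forever. Once it is exhausted, the function reports `escalate` instead of returning partial data as if it were complete.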

Tool Connections

AI agents in SEO typically connect to:

- Search APIs (Google Search Console, SEMrush, Ahrefs, DataForSEO) for keyword and ranking data

- Web crawlers for on-page analysis and competitor content extraction

- Content platforms (WordPress, Contentful) for publishing or updating pages

- Analytics tools (GA4, Looker Studio) for performance feedback

- LLMs (GPT-4, Claude, Gemini) for reasoning, summarization, and content generation

The agent framework — tools like LangChain, AutoGen, or CrewAI — handles the orchestration layer, deciding when to call which tool and how to pass outputs between steps. For a deeper look at the frameworks powering these systems, see top AI agent frameworks in 2026.

The Act Phase in Practice

The "Act" phase is where the agent's reasoning translates into real HTTP requests, database writes, and file outputs. A concrete example: an AI-powered SEO agent tasked with rank tracking will construct a search query string, send it to a SERP API endpoint, parse the JSON response to extract position data, compare that data against a stored baseline, and write a delta record to a database, all within a single execution cycle. If the API returns an error or a CAPTCHA challenge, the agent's error-handling logic decides whether to retry, switch to a fallback data source, or call a CAPTCHA resolution service before retrying. This conditional branching is what separates agents from simple cron jobs.
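The rank-tracking example above can be sketched as three small functions: one that parses a SERP response, one that builds the delta record, and one that picks the next step after a request. The JSON shape (`results` with `url` fields), the function names, and the set of retryable status codes are assumptions made for illustration. Real SERP APIs each define their own response format.

```python
import json


def extract_position(serp_json, domain):
    """Return the 1-based position of the first result for the domain, or None."""
    data = json.loads(serp_json)
    for position, result in enumerate(data.get("results", []), start=1):
        if domain in result.get("url", ""):
            return position
    return None


def delta_record(keyword, baseline, current):
    """Build the record to store; a positive change means the page moved up."""
    change = None if None in (baseline, current) else baseline - current
    return {"keyword": keyword, "baseline": baseline, "current": current, "change": change}


def next_action(status_code, captcha_detected, attempt, max_retries=2):
    """Choose the next step after a request (hypothetical policy)."""
    if captcha_detected:
        return "solve_captcha"
    if status_code == 200:
        return "parse"
    if status_code in (429, 500, 502, 503) and attempt < max_retries:
        return "retry"
    return "fallback"


# Hypothetical SERP payload; the field names are assumptions.
serp = json.dumps({"results": [
    {"url": "https://rival.example/tools"},
    {"url": "https://ours.example/pm-software"},
]})
current = extract_position(serp, "ours.example")
record = delta_record("project management software", baseline=5, current=current)
print(record)
print(next_action(429, False, attempt=0), next_action(429, False, attempt=2))
```

Keeping the branching policy in a single function makes it easy to test and to change, for example to add backoff or a different fallback order.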

Core Use Cases for AI Agents in SEO

1. Keyword Research at Scale

Manual keyword research has a ceiling. A human analyst can process hundreds of keywords per session; an AI-powered SEO agent can process tens of thousands. The agent queries multiple keyword APIs in parallel, clusters results by semantic similarity, scores each cluster by search volume and difficulty, and outputs a prioritized roadmap.
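As a rough sketch of the clustering and scoring step, the example below groups keywords by word overlap and ranks the clusters. Two caveats apply: token overlap (Jaccard similarity) is a simple stand-in for the semantic similarity a real agent would likely compute with embeddings, and the scoring formula, threshold, and sample numbers are hypothetical.

```python
def cluster_keywords(rows, threshold=0.5):
    """Greedily group (keyword, volume, difficulty) rows by token overlap with a cluster seed."""
    clusters = []
    for keyword, volume, difficulty in rows:
        tokens = set(keyword.lower().split())
        if not tokens:
            continue  # skip empty keywords
        for cluster in clusters:
            seed = cluster["seed_tokens"]
            if len(tokens & seed) / len(tokens | seed) >= threshold:
                cluster["keywords"].append(keyword)
                cluster["volume"] += volume
                cluster["difficulties"].append(difficulty)
                break
        else:
            # No existing cluster was close enough, so this keyword seeds a new one.
            clusters.append({"seed_tokens": tokens, "keywords": [keyword],
                             "volume": volume, "difficulties": [difficulty]})
    return clusters


def score_clusters(clusters):
    """Rank clusters by total volume, discounted by mean difficulty (0 to 100)."""
    scored = []
    for cluster in clusters:
        mean_difficulty = sum(cluster["difficulties"]) / len(cluster["difficulties"])
        score = cluster["volume"] * (100 - mean_difficulty) / 100
        scored.append({"keywords": cluster["keywords"], "volume": cluster["volume"], "score": score})
    return sorted(scored, key=lambda c: c["score"], reverse=True)


# Hypothetical keyword data: (keyword, monthly volume, difficulty).
rows = [
    ("project management software", 10000, 70),
    ("best project management software", 4000, 60),
    ("gantt chart template", 3000, 30),
    ("free gantt chart template", 1500, 20),
]
ranked = score_clusters(cluster_keywords(rows))
for cluster in ranked:
    print(cluster["score"], cluster["keywords"])
```

Greedy seeding is order-dependent, so the first keyword in each group defines the cluster. That is acceptable for a sketch, but a production pipeline would more likely use a proper clustering algorithm.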

Critically, the agent can also monitor keyword trends continuously — flagging when a previously low-volume term starts gaining traction, without waiting for a scheduled weekly review. Search Engine Land's practical walkthrough of AI agent SEO workflows illustrates how this continuous monitoring loop works in production environments, including how agents handle data freshness and API rate limits.
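One simple way to flag a term that is gaining traction is to compare its latest volume against the average of earlier readings. The sketch below assumes a hypothetical `history` mapping of keyword to a list of periodic volume readings. The growth factor and minimum volume are illustrative defaults, not recommended values.

```python
def flag_rising(history, min_growth=2.0, min_volume=100):
    """Return (keyword, growth_ratio) for terms whose latest volume outpaces their past average."""
    flagged = []
    for keyword, volumes in history.items():
        if len(volumes) < 2:
            continue  # not enough data to form a baseline
        *previous, latest = volumes
        baseline = sum(previous) / len(previous)
        if baseline > 0 and latest >= min_volume and latest / baseline >= min_growth:
            flagged.append((keyword, round(latest / baseline, 2)))
    # Strongest growth first.
    return sorted(flagged, key=lambda item: -item[1])


# Hypothetical volume readings, oldest first.
history = {
    "ai project planner": [40, 50, 60, 200],
    "gantt chart": [3000, 3100, 2900, 3050],
}
rising = flag_rising(history)
print(rising)
```

The minimum volume guard keeps tiny terms from being flagged just because they doubled from a handful of searches.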

2. Automated Competitor Analysis

AI agents in SEO can crawl competitor sites on a defined schedule, extract heading structures, internal link patterns, and content depth, then compare those signals against your own pages. The output is a structured gap analysis: topics your competitors cover that you don't, pages where their content is significantly longer or better structured, and backlink sources you haven't tapped.
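Heading extraction, the first step of such a gap analysis, can be sketched with Python's standard `html.parser` module. The HTML snippets and the helper names (`HeadingExtractor`, `extract_headings`, `missing_topics`) are hypothetical, and the comparison here is an exact, case-insensitive match on heading text, which is much cruder than what a production agent would use.

```python
from html.parser import HTMLParser


class HeadingExtractor(HTMLParser):
    """Collect (tag, text) pairs for h1, h2, and h3 elements."""

    def __init__(self):
        super().__init__()
        self.headings = []
        self._current = None
        self._buffer = []

    def handle_starttag(self, tag, attrs):
        if tag in ("h1", "h2", "h3"):
            self._current = tag
            self._buffer = []

    def handle_data(self, data):
        if self._current:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == self._current:
            # Collapse whitespace so text split by inline tags reads as one heading.
            text = " ".join("".join(self._buffer).split())
            self.headings.append((tag, text))
            self._current = None


def extract_headings(html):
    parser = HeadingExtractor()
    parser.feed(html)
    return parser.headings


def missing_topics(our_html, competitor_html):
    """Return competitor headings that have no exact (case-insensitive) match on our page."""
    ours = {text.lower() for _, text in extract_headings(our_html)}
    return [text for _, text in extract_headings(competitor_html) if text.lower() not in ours]


# Hypothetical page fragments.
our_page = "<h1>Project Management Guide</h1><h2>Gantt Charts</h2>"
their_page = ("<h1>Project management guide</h1><h2>Gantt charts</h2>"
              "<h2>Resource <em>Planning</em></h2>")
topics = missing_topics(our_page, their_page)
print(topics)
```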

3. Technical SEO Auditing

Site crawls, broken link detection, Core Web Vitals monitoring, and schema validation are all tasks that AI-powered SEO agents handle well. The agent runs the crawl, identifies issues, prioritizes them by estimated ranking impact, and can even generate fix recommendations or push tickets to a development backlog.
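Prioritization can be as simple as weighting each issue type and multiplying by page traffic. The sketch below is an assumption-heavy illustration: the severity weights, the issue types, and the use of monthly visits as a proxy for ranking impact are all hypothetical choices.

```python
# Hypothetical severity weights per issue type.
SEVERITY_WEIGHTS = {"broken_link": 3, "slow_lcp": 2, "missing_schema": 1}


def prioritize(issues):
    """Return ticket titles ordered by estimated impact (weight times monthly visits)."""
    def impact(issue):
        return SEVERITY_WEIGHTS.get(issue["type"], 1) * issue["monthly_visits"]

    ranked = sorted(issues, key=impact, reverse=True)
    return [f"[{impact(issue)}] {issue['type']} on {issue['url']}" for issue in ranked]


# Hypothetical crawl findings.
issues = [
    {"url": "/pricing", "type": "missing_schema", "monthly_visits": 9000},
    {"url": "/blog/gantt", "type": "broken_link", "monthly_visits": 4000},
    {"url": "/features", "type": "slow_lcp", "monthly_visits": 5000},
]
tickets = prioritize(issues)
for ticket in tickets:
    print(ticket)
```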

4. Content Optimization

Given a target keyword and an existing page, an AI agent can analyze the top-ranking pages, identify missing subtopics, suggest structural improvements, and rewrite specific sections — all without a human writing a single prompt beyond the initial goal.

5. Automated Data Collection and SERP Monitoring

Rank tracking, SERP feature monitoring, and competitor price or content changes all require continuous data collection. This is where AI-powered SEO agents interact most directly with the live web, and where they encounter the most friction.

The Data Collection Challenge: CAPTCHA and Bot Protection

Automated data collection is the backbone of most SEO agent workflows. Agents need to fetch live SERP data, crawl competitor pages, and pull structured information from protected sources. The problem is that most high-value data sources deploy bot protection.

When an AI-powered SEO agent sends repeated requests to a search engine results page, a competitor's pricing page, or a review aggregator, it will eventually trigger a CAPTCHA challenge. Common types include:

- reCAPTCHA v2/v3 — Google's challenge system, ranging from checkbox interactions to invisible behavioral scoring

- Cloudflare Turnstile — a newer, privacy-focused challenge that evaluates browser signals

When a CAPTCHA fires, the agent's data collection pipeline stalls. If the agent can't resolve the challenge, it either fails silently or returns incomplete data — both of which corrupt downstream SEO analysis.
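To avoid the silent-failure case, a pipeline can check each response for challenge markers and raise an explicit error instead of passing the page downstream. The sketch below looks for the widget class names and script hosts that reCAPTCHA and Turnstile pages commonly include. Treat it as a heuristic: the helper names are hypothetical, the marker list is not exhaustive, and a page can embed a CAPTCHA widget (for example on a login form) without being a block page.

```python
# Substrings commonly found in pages that embed these challenges (heuristic, not exhaustive).
CAPTCHA_MARKERS = {
    "recaptcha": ("g-recaptcha", "google.com/recaptcha"),
    "turnstile": ("cf-turnstile", "challenges.cloudflare.com/turnstile"),
}


class CaptchaEncountered(Exception):
    """Raised so a challenge page is never mistaken for real data."""

    def __init__(self, kind, url):
        super().__init__(f"{kind} challenge at {url}")
        self.kind = kind
        self.url = url


def detect_captcha(html):
    """Return the challenge type found in the HTML, or None."""
    lowered = html.lower()
    for kind, markers in CAPTCHA_MARKERS.items():
        if any(marker in lowered for marker in markers):
            return kind
    return None


def ensure_clean(url, html):
    """Return the HTML unchanged, or raise if it looks like a challenge page."""
    kind = detect_captcha(html)
    if kind:
        raise CaptchaEncountered(kind, url)
    return html


print(detect_captcha('<div class="g-recaptcha" data-sitekey="..."></div>'))
print(detect_captcha("<html><body>Search results</body></html>"))
```

Raising a dedicated exception gives the agent's error-handling logic a clear signal to branch on, rather than leaving it to notice that expected fields are missing.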

This is a structural problem for any team running AI agents in SEO at scale. The solution isn't to avoid protected sources; it's to build CAPTCHA resolution into the pipeline as a standard component.

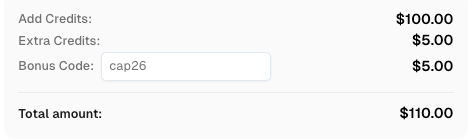


Integrating CapSolver into SEO Agent Pipelines

CapSolver is an AI-powered CAPTCHA solving service that resolves reCAPTCHA, Cloudflare Turnstile, GeeTest, and other challenge types via a REST API. It uses machine learning models — not human workers — to return valid tokens within 1–5 seconds.

For teams running AI-powered SEO agents, CapSolver functions as a tool the agent can call whenever it encounters a CAPTCHA wall. The integration pattern is straightforward: when the agent's HTTP client receives a CAPTCHA challenge response, it passes the relevant parameters (site key, page URL, challenge type) to the CapSolver API, receives a solved token, and injects that token into the next request.

This keeps the data collection pipeline running without human intervention, which is exactly what autonomous SEO agents require.
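The pattern described above (submit the challenge parameters, wait for a token, reuse it in the next request) might look roughly like the sketch below. Several details are assumptions to verify against the CapSolver API documentation before use: the `createTask` and `getTaskResult` endpoint paths, the `ReCaptchaV2TaskProxyLess` task type, and the request and response field names. The function name, polling defaults, and the injectable `post` and `sleep` parameters are hypothetical conveniences that allow the logic to be tested without network access.

```python
import json
import time
import urllib.request

API_BASE = "https://api.capsolver.com"  # assumed base URL; confirm in the official docs


def post_json(url, payload):
    """POST a JSON payload and return the decoded JSON response."""
    request = urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request, timeout=30) as response:
        return json.loads(response.read().decode("utf-8"))


def solve_recaptcha_v2(client_key, page_url, site_key, post=post_json,
                       poll_interval=1.0, max_polls=30, sleep=time.sleep):
    """Create a solving task, poll until it is ready, and return the token."""
    created = post(f"{API_BASE}/createTask", {
        "clientKey": client_key,
        "task": {
            "type": "ReCaptchaV2TaskProxyLess",  # assumed task type name
            "websiteURL": page_url,
            "websiteKey": site_key,
        },
    })
    if created.get("errorId"):
        raise RuntimeError(f"createTask failed: {created}")
    task_id = created["taskId"]

    for _ in range(max_polls):
        result = post(f"{API_BASE}/getTaskResult",
                      {"clientKey": client_key, "taskId": task_id})
        if result.get("errorId"):
            raise RuntimeError(f"getTaskResult failed: {result}")
        if result.get("status") == "ready":
            return result["solution"]["gRecaptchaResponse"]
        sleep(poll_interval)
    raise TimeoutError("CAPTCHA task did not complete within the polling budget")
```

An agent would call `solve_recaptcha_v2` with its API key, the page URL, and the site key found in the challenge page, then submit the returned token with its next request to the target site. The polling budget bounds how long a single challenge can hold up the pipeline.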

CapSolver supports all major CAPTCHA types encountered in SEO automation workflows. You can review the full list of supported solver types in the CapSolver API documentation.

For teams building web scraping infrastructure alongside their SEO agents, the top web scraping tools guide for 2026 covers how to combine crawlers, proxies, and CAPTCHA solving into a reliable stack.

Note on compliance: Automated data collection should always respect a site's robots.txt directives and applicable terms of service. CapSolver is designed for legitimate automation use cases: testing, research, and data collection within legal and ethical boundaries.
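A robots.txt check can run before any URL is queued for crawling. The sketch below uses Python's standard `urllib.robotparser` module. The robots.txt content, the `seo-agent-bot` user agent, and the URLs are hypothetical, and the file is parsed from a string here so the example runs offline (a live agent would fetch each site's actual robots.txt).

```python
from urllib.robotparser import RobotFileParser


def allowed_urls(robots_txt, user_agent, urls):
    """Return only the URLs that the given robots.txt permits for this user agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if parser.can_fetch(user_agent, url)]


# Hypothetical robots.txt content and crawl queue.
robots = """User-agent: *
Disallow: /private/
"""
queue = [
    "https://example.com/blog/post",
    "https://example.com/private/report",
]
permitted = allowed_urls(robots, "seo-agent-bot", queue)
print(permitted)
```

This covers only robots.txt. Terms of service and applicable regulations still need a separate review.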

Comparison: Traditional SEO Workflows vs. AI Agent Workflows

| Dimension | Traditional SEO Workflow | AI Agent SEO Workflow |
|---|---|---|
| Keyword research | Manual tool queries, analyst review | Automated multi-source aggregation and clustering |
| Competitor analysis | Periodic manual audits | Continuous automated monitoring |
| Content optimization | Human-written briefs and edits | Agent-generated recommendations, optional auto-drafting |
| Rank tracking | Scheduled tool reports | Real-time agent monitoring with alerting |
| Data collection | Manual exports, limited scale | Automated crawling with CAPTCHA resolution |
| Human involvement | High: every step requires input | Low: humans review outputs and set goals |
| Scalability | Limited by analyst bandwidth | Scales with compute, not headcount |

What AI Agents in SEO Still Can't Do Well

Honest assessment matters here. AI agents in SEO are strong at pattern recognition, data aggregation, and repetitive execution. They are weaker at:

- Strategic judgment: deciding which keywords align with business goals requires human context that general-purpose models do not yet encode

- Brand voice: generated content often needs editorial review before publishing; agents optimize for structure and coverage, not tone

- Novel situations: agents struggle when they encounter data structures, site architectures, or CAPTCHA variants they haven't been trained on

- Relationship-based SEO: link building, PR outreach, and partnership development remain human-driven activities where trust and communication matter more than data throughput

- Interpreting ambiguous signals: when ranking changes have multiple plausible causes (algorithm update, competitor action, technical regression), agents surface the data but rarely diagnose the root cause reliably

The most effective deployments treat AI-powered SEO agents as force multipliers for human strategists, not replacements. Agents handle the data layer; humans handle the judgment layer. This division of labor is consistent with how the broader AI agents industry is maturing: autonomous execution for well-defined tasks, human oversight for decisions with strategic consequences.

For a broader look at how agentic AI systems are being applied across industries, the overview of what agentic AI is and how it works provides useful context.

Getting Started: A Practical Framework

If you're evaluating AI-powered SEO agents for your team, a phased approach reduces risk:

Phase 1 — Automate data collection first. Start with rank tracking and competitor monitoring. These are high-frequency, low-judgment tasks where agents deliver immediate time savings.

Phase 2 — Add keyword research automation. Connect to keyword APIs, build clustering logic, and have the agent surface opportunities for human review rather than acting autonomously.

Phase 3 — Introduce content optimization assistance. Use agents to generate briefs and identify gaps, with human writers handling final output.

Phase 4 — Build a full pipeline with CAPTCHA handling. As your data collection expands to protected sources, integrate a CAPTCHA solving layer. CapSolver's API fits into this step as a standard infrastructure component — the same way you'd add a proxy rotation service.

Conclusion

AI agents in SEO represent a genuine shift in how teams approach search optimization: not a replacement for strategy, but infrastructure that removes the manual bottlenecks between data and action. The AI agents industry is growing fast, and AI-powered SEO agents are moving from experimental tools to standard components in competitive SEO stacks.

The technical challenges are real but solvable. CAPTCHA walls are the most common point of failure in automated data collection pipelines, and integrating a reliable solving layer like CapSolver keeps those pipelines running at the scale that autonomous agents require.

If you're building or evaluating an SEO automation stack, explore CapSolver's API to see how it fits into your data collection workflow.

FAQ

Q: What is the difference between an AI SEO tool and an AI SEO agent?

A: A tool surfaces data and waits for human action. An agent perceives a goal, selects tools, executes tasks, evaluates results, and adapts — all without step-by-step human instruction. The distinction is autonomy and multi-step reasoning.

Q: Do AI agents in SEO require coding knowledge to set up?

A: It depends on the platform. Some AI-powered SEO agents come as no-code SaaS products. Others are built on frameworks like LangChain or AutoGen and require Python or JavaScript knowledge. Enterprise deployments typically involve engineering resources for custom integrations.

Q: Why do SEO data collection agents encounter CAPTCHAs?

A: Search engines and competitor sites use bot detection to protect their infrastructure from excessive automated requests. When an agent sends high-frequency requests that match bot traffic patterns, the site responds with a CAPTCHA challenge to verify the requester is human. Without a resolution mechanism, the agent's pipeline stalls.

Q: Is automated SEO data collection legal?

A: It depends on the source and jurisdiction. Many sites permit crawling within the limits defined in their robots.txt file. Scraping personal data or violating explicit terms of service can create legal exposure. Always review the target site's terms and applicable regulations before deploying automated collection at scale.

Q: How do AI-powered SEO agents handle ranking volatility?

A: Well-designed agents monitor ranking changes continuously and can be configured to trigger alerts or automated responses — such as flagging a page for content review — when rankings drop beyond a defined threshold. This is one of the clearest advantages over scheduled weekly reports, which may miss rapid fluctuations.
