Failed to save tasks due to website restrictions

Answer

This error occurs when a web scraping task cannot be saved because the target website blocks automated access or restricts crawling. Typical causes are security protections on the site, blocked domains, and misconfigured scraping workflows that trigger detection systems.

Detailed Explanation

Modern websites increasingly implement security mechanisms designed to prevent automated data extraction. These systems may analyze request patterns, browser fingerprints, cookies, or URL structures to detect non-human behavior. When a scraper attempts to save or execute a task against a restricted domain, the platform may stop the workflow at the configuration stage to avoid violating website policies.

Common triggers include explicitly disallowed domains (such as social platforms), URL parameters containing restricted keywords, or repetitive navigation patterns that resemble bot activity. In many cases, even correct workflows fail if the underlying website dynamically blocks automated tools or returns security challenges instead of expected content.
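The first two triggers can be checked before a task is saved. The sketch below is only an illustration of that idea: `BLOCKED_DOMAINS`, `RESTRICTED_KEYWORDS`, and `find_url_issues` are hypothetical names, and the example values are placeholders, since the rules any given platform actually applies are not stated here.

```python
from urllib.parse import urlparse, parse_qsl

# Hypothetical rule sets for illustration only; substitute the rules
# your own platform documents.
BLOCKED_DOMAINS = ("social.example",)
RESTRICTED_KEYWORDS = ("login", "token")


def find_url_issues(url: str) -> list[str]:
    """Return human-readable reasons why a URL might be rejected."""
    issues = []
    parsed = urlparse(url)
    host = (parsed.hostname or "").lower()

    # Match the blocked domain itself and any of its subdomains.
    for domain in BLOCKED_DOMAINS:
        if host == domain or host.endswith("." + domain):
            issues.append(f"blocked domain: {domain}")

    # Look for restricted keywords in query parameter names and values.
    for key, value in parse_qsl(parsed.query, keep_blank_values=True):
        for word in RESTRICTED_KEYWORDS:
            if word in key.lower() or word in value.lower():
                issues.append(f"restricted keyword '{word}' in parameter '{key}'")

    return issues
```

An empty list means none of the assumed rules matched. It does not guarantee that the website itself will accept automated requests.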

Solutions / Methods

- Validate target URL structure: Ensure the input URL does not point to a restricted domain or carry embedded parameters that trigger blocking rules. Where a direct URL is rejected, open the site's main page and reach the target content through in-page search or keyword-based navigation instead.

- Adjust workflow and request behavior: Add delays, pagination controls, and proper loop configurations to reduce detection risk. Misconfigured loops or overly aggressive crawling often lead to restriction errors.

- Handle security challenges and verification layers: If CAPTCHA or verification pages appear during task execution, an automated CAPTCHA-solving service such as CapSolver can help process challenges such as Cloudflare checks or reCAPTCHA, provided the automation stays controlled and compliant with the website's policies.
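The delay and pagination controls from the second item can be sketched as a small generator. This is a minimal illustration, not any platform's built-in loop setting: `paced` and its parameters are hypothetical names, and the default delay range and page cap are arbitrary assumptions to tune per site.

```python
import random
import time
from typing import Callable, Iterable, Iterator, Optional


def paced(
    items: Iterable[str],
    min_delay: float = 2.0,
    max_delay: float = 5.0,
    max_items: int = 20,
    sleep: Callable[[float], None] = time.sleep,
    rng: Optional[random.Random] = None,
) -> Iterator[str]:
    """Yield at most max_items items, pausing a random interval between them."""
    rng = rng or random.Random()
    for index, item in enumerate(items):
        if index >= max_items:
            break  # hard cap so a misconfigured loop cannot run unbounded
        if index > 0:
            # Randomized wait so requests do not arrive at a fixed rhythm.
            sleep(rng.uniform(min_delay, max_delay))
        yield item
```

A loop such as `for url in paced(page_urls): ...` would then visit each page with a pause in between. The `sleep` parameter is injectable so the pacing can be tested without actually waiting.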

Best Practice / Tips

To reduce scraping failures, always test workflows on small datasets before scaling. Avoid sending high-frequency requests, and simulate natural browsing behavior where possible. Monitoring site structure changes is also essential because even minor HTML updates can break scraping logic or trigger security defenses.
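One way to notice structure changes or security challenges early is to sanity-check each fetched page before extracting data. The sketch below assumes the page HTML is already available as a string. `check_page` is a hypothetical helper, and the strings in `CHALLENGE_MARKERS` are illustrative guesses rather than an authoritative list, since real challenge pages differ by vendor and change over time.

```python
# Illustrative marker strings only; adjust them to what you actually
# observe on the target site.
CHALLENGE_MARKERS = ("captcha", "verify you are human")


def check_page(html: str, required_snippets: tuple[str, ...]) -> list[str]:
    """Return a list of problems found in a fetched page (empty if none)."""
    problems = []
    lowered = html.lower()

    # A challenge page usually replaces the expected content entirely.
    for marker in CHALLENGE_MARKERS:
        if marker in lowered:
            problems.append(f"possible challenge page: found '{marker}'")

    # Missing markup suggests the site layout changed since the workflow was built.
    for snippet in required_snippets:
        if snippet.lower() not in lowered:
            problems.append(f"expected markup missing: {snippet}")

    return problems
```

Running this check on a handful of pages during a small test run gives an early signal before the workflow is scaled up.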

👉 Related:

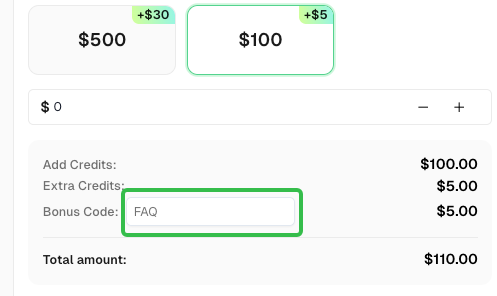

Use code

FAQwhen signing up at CapSolver to receive an additional 5% bonus on your recharge.
