What Is Error 520 and How Can You Prevent It When Using Proxies?

Answer

Error 520 occurs when a reverse proxy receives an invalid, empty, or unexpected HTTP response from the origin server. In proxy or scraping environments, it is commonly caused by malformed headers, connection interruptions, or server-side instability. Preventing it requires stabilizing server responses, optimizing request headers, and ensuring compatibility between proxies and target infrastructure.

Detailed Explanation

Error 520 is a non-standard HTTP status code (best known from Cloudflare) that a reverse proxy layer generates when it receives a response it cannot interpret. In other words, the proxy did connect to the origin server, but the reply it got back was empty, malformed, or otherwise failed to meet HTTP protocol expectations.
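To make "cannot interpret" concrete, here is a simplified Python sketch of the kind of check a proxy applies to the head of an HTTP/1.x response before relaying it. The function name `looks_like_valid_http_head` and both regular expressions are illustrative assumptions. Real proxies, Cloudflare included, use full protocol parsers and enforce rules not shown here.

```python
import re

# Simplified HTTP/1.x grammar (assumption); real parsers accept and reject more cases.
STATUS_LINE = re.compile(rb"HTTP/1\.[01] [1-5]\d\d(?: [^\r\n]*)?")
HEADER_LINE = re.compile(rb"[!#$%&'*+\-.^_`|~0-9A-Za-z]+:[^\r\n]*")


def looks_like_valid_http_head(raw: bytes) -> tuple[bool, str]:
    """Return (ok, reason) for the status line and headers of a raw response."""
    if not raw:
        return False, "empty response"
    head, sep, _body = raw.partition(b"\r\n\r\n")
    if not sep:
        # No blank line ends the headers: the connection likely closed mid-response.
        return False, "header section not terminated"
    lines = head.split(b"\r\n")
    if not STATUS_LINE.fullmatch(lines[0]):
        return False, "malformed status line"
    for line in lines[1:]:
        # Each header must be "token:value" with no CR/LF inside it.
        if not HEADER_LINE.fullmatch(line):
            return False, "malformed header line"
    return True, "ok"
```

An empty reply, a reply cut off before the blank line, or a garbled status line each fail this check. These correspond to the situations in which a proxy would answer with a 520 instead of forwarding the response.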

In proxy-based scraping workflows, the request path becomes more complex: client → forward proxy → reverse proxy → origin server. Each additional hop is another place where the request or response can be altered. For example, proxies may inject or modify headers such as X-Forwarded-For. Because each hop typically appends an address to that header, it grows with the length of the chain and can push the request past header size limits or break formatting rules.
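The following minimal sketch shows how header size accumulates along the chain. It assumes each hop appends an address to X-Forwarded-For, which is a common convention rather than a guarantee. The 8 KB limit is an illustrative assumption only, since actual limits differ between servers, CDNs, and configurations. `forward_through_proxy` and `header_block_size` are hypothetical helpers.

```python
# Illustrative limit only (assumption); actual limits vary by server, CDN, and configuration.
ASSUMED_HEADER_LIMIT = 8 * 1024


def forward_through_proxy(headers: dict[str, str], client_ip: str) -> dict[str, str]:
    """Simulate one proxy hop appending an address to X-Forwarded-For."""
    out = dict(headers)
    prior = out.get("X-Forwarded-For")
    out["X-Forwarded-For"] = f"{prior}, {client_ip}" if prior else client_ip
    return out


def header_block_size(headers: dict[str, str]) -> int:
    """Approximate wire size: one 'Name: value' line plus CRLF per header, then a blank line."""
    return sum(len(f"{k}: {v}\r\n".encode()) for k, v in headers.items()) + 2


# Usage: a large cookie jar plus a two-hop proxy chain.
request = {"Host": "example.com", "Cookie": "session=" + "x" * 8200}
for hop_ip in ["203.0.113.5", "198.51.100.7"]:
    request = forward_through_proxy(request, hop_ip)
print(request["X-Forwarded-For"])  # 203.0.113.5, 198.51.100.7
print(header_block_size(request) > ASSUMED_HEADER_LIMIT)  # True
```

A large Cookie header combined with a growing forwarding chain can cross a limit like this even when no single header looks unusual.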

Common triggers include oversized headers (often caused by large cookies), abrupt connection termination, invalid HTTP formatting, and server crashes during response generation. In addition, bot-management and other security systems may deliberately disrupt responses or close connections when they detect automated traffic, which produces the same 520-like behavior.

Most 5xx errors point to a specific failure, for example 503 Service Unavailable or 504 Gateway Timeout. Error 520 does not. Instead, it acts as a "catch-all" signal that something in the response pipeline is incompatible or unstable, which makes debugging harder in automation environments.
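A 520 returned by a reverse proxy hides the underlying cause. Where it is permitted, it can help to request the origin directly and map the low-level failure back to the triggers listed above. The sketch below does this for exceptions raised by Python's standard `http.client` module. The mapping is a heuristic assumption, and `classify_520_cause` is a hypothetical helper, not part of any library.

```python
import http.client


def classify_520_cause(exc: BaseException) -> str:
    """Map a client-side exception to a likely 520-style trigger (heuristic)."""
    # RemoteDisconnected subclasses both ConnectionResetError and BadStatusLine,
    # so it must be checked before either parent class.
    if isinstance(exc, http.client.RemoteDisconnected):
        return "empty response: server closed the connection before replying"
    if isinstance(exc, ConnectionResetError):
        return "connection reset mid-response"
    if isinstance(exc, http.client.LineTooLong):
        return "oversized status or header line"
    if isinstance(exc, http.client.BadStatusLine):
        return "invalid HTTP formatting in status line"
    if isinstance(exc, http.client.IncompleteRead):
        return "body truncated (server crash or abrupt close)"
    if isinstance(exc, TimeoutError):
        return "origin too slow to respond"
    return "unclassified"
```

The order of the checks matters because `http.client` exceptions share parent classes. Testing the most specific class first keeps the labels accurate.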

Solutions / Methods

- Optimize HTTP headers and request structure: Ensure headers are correctly formatted and within size limits. Avoid excessive cookies and unnecessary metadata. When using proxies, verify that they do not inject conflicting or oversized headers.

- Stabilize origin server behavior: Monitor server logs for crashes, timeouts, and malformed responses. Adjust timeout settings and verify HTTP/2 (or other protocol) configuration to prevent incomplete responses.

- Handle security protections intelligently: Many 520 errors during scraping are indirectly caused by security systems. Automated captcha-solving services such as CapSolver can help maintain valid sessions and reduce abnormal responses triggered by bot-detection mechanisms.
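The header checks in the first item can be automated before a request leaves your client. This is a minimal sketch under assumed budgets (8 KB in total, 4 KB for Cookie). The function `check_request_headers` and both limits are hypothetical and should be tuned to the target's real constraints. The function flags:

- invalid header names
- CR/LF characters inside values
- oversized cookies
- duplicate Host or Content-Length headers, a common form of conflicting headers
- an oversized header block overall

```python
import re

# Hypothetical budgets for illustration; tune them to the target's real limits.
MAX_TOTAL_HEADER_BYTES = 8 * 1024
MAX_COOKIE_BYTES = 4 * 1024
TOKEN = re.compile(r"[!#$%&'*+\-.^_`|~0-9A-Za-z]+")
# Headers that should appear only once per request.
SINGLETON_HEADERS = {"host", "content-length"}


def check_request_headers(headers: list[tuple[str, str]]) -> list[str]:
    """Return the problems found in an outgoing header list (empty list means OK)."""
    problems: list[str] = []
    counts: dict[str, int] = {}
    total = 2  # final blank line (CRLF) that ends the header block
    for name, value in headers:
        if not TOKEN.fullmatch(name):
            problems.append(f"invalid header name: {name!r}")
        if "\r" in value or "\n" in value:
            problems.append(f"CR/LF inside value of {name}")
        lower = name.lower()
        counts[lower] = counts.get(lower, 0) + 1
        if lower == "cookie" and len(value.encode()) > MAX_COOKIE_BYTES:
            problems.append("Cookie header exceeds budget")
        total += len(f"{name}: {value}\r\n".encode())
    for lower in SINGLETON_HEADERS:
        if counts.get(lower, 0) > 1:
            problems.append(f"duplicate {lower} header")
    if total > MAX_TOTAL_HEADER_BYTES:
        problems.append(f"header block is {total} bytes")
    return problems
```

Running a check like this on the headers your proxy actually forwards, not only on the ones you set, helps catch headers the proxy itself injects.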

Best Practice / Tips

- Rotate proxies carefully to avoid inconsistent request fingerprints

- Keep request headers minimal and consistent across sessions

- Validate responses with retry logic and fallback mechanisms

- Combine proxy usage with browser automation tools for more realistic traffic patterns
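The retry and fallback tip can be sketched as follows. `fetch_with_retry` is a hypothetical helper that takes any `fetch(url, proxy)` callable you supply, such as a wrapper around your HTTP client. It assumes that callable returns a `(status, body)` pair or raises `ConnectionError`/`TimeoutError`. The helper treats a 520 status or an empty 200 body as retryable. It moves to the next proxy only after a failure and waits between attempts using exponential backoff with jitter.

```python
import random
import time
from typing import Callable, Optional

# Caller-supplied transport (assumption): returns (status, body) or raises on network errors.
Fetch = Callable[[str, str], tuple[int, bytes]]


def fetch_with_retry(
    url: str,
    proxies: list[str],
    fetch: Fetch,
    max_attempts: int = 4,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> tuple[int, bytes]:
    """Retry 520s, empty 200s, and transport errors, falling back to the next proxy."""
    if not proxies:
        raise ValueError("need at least one proxy")
    last_error: Optional[BaseException] = None
    for attempt in range(max_attempts):
        # Stay on one proxy until it fails, then fall back to the next one.
        proxy = proxies[attempt % len(proxies)]
        try:
            status, body = fetch(url, proxy)
            if not (status == 520 or (status == 200 and not body)):
                return status, body
            last_error = RuntimeError(f"status {status}, {len(body)} bytes via {proxy}")
        except (ConnectionError, TimeoutError) as exc:
            last_error = exc
        if attempt < max_attempts - 1:
            # Exponential backoff with full jitter: wait up to base_delay * 2**attempt.
            sleep(random.uniform(0, base_delay * 2**attempt))
    raise RuntimeError(f"gave up after {max_attempts} attempts") from last_error
```

Injecting `sleep` keeps the function testable without real delays. In production the default `time.sleep` applies. To stay consistent with the fingerprint tip above, the supplied `fetch` should send the same headers regardless of which proxy it uses.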

👉 Related:

CapSolver FAQ — capsolver.com

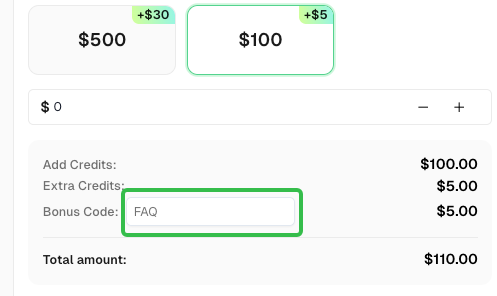

Use code "FAQ" when signing up at CapSolver to receive an additional 5% bonus on your recharge.