Elevating Enterprise Automation: LLM-Powered Infrastructure for Seamless CAPTCHA Recognition & Operational Efficiency

Ethan Collins

Pattern Recognition Specialist

In the rapidly evolving landscape of digital transformation, CAPTCHAs have transitioned from basic security checks to sophisticated business process filters. While essential for security, they often introduce significant friction, creating an "efficiency gap" in automated workflows. Globally, enterprises collectively spend an estimated 500,000 hours daily on manual CAPTCHA resolution, hindering the seamless execution of critical business operations.

This manual intervention leads to several challenges:

- High Operational Costs: Reliance on human operators for CAPTCHA resolution scales poorly with increasing business volume.

- Process Disruptions: Automated scripts frequently halt upon encountering CAPTCHAs, breaking the continuity of business processes.

- Privacy & Technical Pressures: Evolving privacy standards pose challenges for traditional behavior-based verification methods, demanding more transparent and efficient solutions.

Our Vision: We believe CAPTCHAs should empower, not impede, business growth. By providing AI automation infrastructure for automated CAPTCHA recognition, we aim to help enterprises reduce manual intervention, lower operational costs, and improve the end-to-end efficiency of their core business processes.

📈 I. The Evolution of Verification: From Static Rules to Intelligent Synergy

The journey of verification technology over the past 25 years reflects a continuous pursuit of balance between security and user experience. The advent of Large Language Models (LLMs) marks a pivotal shift, ushering in a new era of intelligent, synergistic processing.

| Stage | Core Technology | Processing Logic | Business Impact |
|---|---|---|---|
| V1 (2000s) | Distorted Characters | Simple OCR Recognition | Vulnerable to basic automation, high initial efficiency |
| V2 (2014+) | Image Selection | Object Detection & Classification | Required extensive manual labeling, increased operational costs |
| V3 (2024+) | Behavioral Analysis | Risk Scoring & Fingerprinting | Faced privacy concerns, challenging for efficient automation |
| V4 (2026+) | LLM Synergy | Semantic Understanding & Generation | High Reliability, Enhanced Efficiency, Full Automation |

Key Insight: As CAPTCHAs become more semantic and multimodal, rule-based or hard-coded solutions are proving insufficient. Enterprises need infrastructure with semantic understanding capabilities to meet their automation needs, and this is where applying LLMs to CAPTCHA handling becomes indispensable.

🧠 II. LLM Empowerment: Core Capabilities of Automation Infrastructure

Integrating large models into the verification processing ecosystem makes them intelligent engines driving business process efficiency.

Following this trend, some enterprise-oriented automation infrastructure platforms have begun building LLM capabilities into production services. For example, CapSolver provides CAPTCHA automation processing services by integrating multimodal recognition with large model inference, enabling enterprises to improve the continuity and execution efficiency of business processes without increasing manual intervention.

The core value of such solutions lies not in single-point capabilities, but in serving as underlying infrastructure to help enterprises maintain stable automation capabilities and controllable costs in an evolving verification environment.

2.1 Core Capability 1: Intelligent Risk Decision Engine

Traditional automation often relies on rigid if-else rules for CAPTCHA handling, leading to fragmented, hard-to-maintain, and easily bypassed systems. LLM-powered infrastructure acts as an intelligent risk decision engine, integrating diverse signals for unified, adaptive, and explainable processing.

Traditional Approach (Rule-Based):

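```python
# Traditional way: fixed thresholds checked in order
# Example inputs (illustrative values only)
ip_risk, device_new, behavior_score = 0.9, True, 0.7

if ip_risk > 0.8 and device_new:
    captcha_type = "hard"
elif behavior_score < 0.5:
    captcha_type = "medium"
else:
    captcha_type = "none"
```

LLM-Powered Approach (Contextual Decision-Making):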

```python
# LLM way: pass the full context to the model instead of hard-coding thresholds
context = {
    "ip_reputation": "medium",
    "device_fingerprint": "new_device",
    "behavior_score": 0.65,
    "request_frequency": "high",
    "geo_location": "anomalous",
    "historical_pattern": "deviation_detected"
}

# LLM Output: {"risk_level": "high", "captcha_type": "semantic_image",
#              "difficulty": 0.8, "reason": "Device fingerprint conflicts with new IP geolocation"}
```

Value Proposition:

- ✅ Reduced False Positives (20%+): Minimizing disruptions for legitimate users, enhancing user experience.

- ✅ Explainable Decisions: Providing auditable insights for security operations and continuous optimization.

- ✅ Dynamic Adaptability: Automatically adjusting to evolving verification challenges and business needs.
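
One way the contextual decision above could be wired into a pipeline is sketched below. It assumes the model replies with the JSON shape shown in the example output. The names `build_prompt`, `parse_decision`, and the allowed-value sets are hypothetical and introduced only for illustration (only `semantic_image` comes from the example above), and no particular LLM API is implied.

```python
import json

# Hypothetical value sets; only "semantic_image" and "high" appear in the example above.
ALLOWED_CAPTCHA_TYPES = {"none", "text", "semantic_image"}
ALLOWED_RISK_LEVELS = {"low", "medium", "high"}


def build_prompt(context: dict) -> str:
    """Serialize the risk signals into a prompt that asks for a JSON-only reply."""
    return (
        "Assess the request described by these signals and reply with JSON "
        'containing "risk_level", "captcha_type", "difficulty", and "reason".\n'
        + json.dumps(context, sort_keys=True)
    )


def parse_decision(raw: str) -> dict:
    """Validate model output; raise ValueError so the caller can fall back to rules."""
    try:
        decision = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"model did not return JSON: {exc}") from exc
    if not isinstance(decision, dict):
        raise ValueError("expected a JSON object")
    if decision.get("risk_level") not in ALLOWED_RISK_LEVELS:
        raise ValueError("unexpected risk_level")
    if decision.get("captcha_type") not in ALLOWED_CAPTCHA_TYPES:
        raise ValueError("unexpected captcha_type")
    difficulty = decision.get("difficulty")
    if isinstance(difficulty, bool) or not isinstance(difficulty, (int, float)):
        raise ValueError("difficulty must be a number")
    if not 0.0 <= difficulty <= 1.0:
        raise ValueError("difficulty must be in [0, 1]")
    if not isinstance(decision.get("reason"), str):
        raise ValueError("reason must be a string")
    return decision
```

Validating the reply before acting on it keeps the "explainable decision" auditable: a malformed or out-of-range answer is rejected rather than silently applied.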

2.2 Core Capability 2: Generative Verification Engine

Traditional CAPTCHAs rely on limited question banks, making them susceptible to offline training and cracking by sophisticated automation. Leveraging generative AI, including Diffusion models, creates unique, dynamic verification challenges. Each instance is a novel creation, significantly increasing the cost and complexity for any attempt at pre-trained automation.

```mermaid
graph TD
    A[Traditional CAPTCHA] --> B{Limited Question Bank}
    B --> C[Vulnerable to Offline Training/Cracking]
    D[Generative Verification Engine] --> E{LLM + Diffusion Models}
    E --> F[Infinite, Unique CAPTCHA Instances]
    F --> G[Prohibitive Cost for Unauthorized Automation]
```

Core Principle: Ensure the generalization cost for unauthorized automation exceeds the potential gains from bypassing the verification.

2.3 Core Capability 3: Deep Behavioral Sequence Analysis

While traditional behavioral analysis might flag simple patterns (e.g., straight mouse movements as robotic), LLMs can perform deep behavioral sequence analysis. By vectorizing user operation sequences and processing them through Transformer models, the system can discern subtle human-like nuances from overly perfect automated scripts.

Behavioral Sequence Analysis Flow:

```mermaid
graph LR
    A[User Operation Sequence] --> B[Embedding Vectorization]
    B --> C[Transformer Encoding]
    C --> D[Risk Scoring]
    subgraph User Actions
        E[Mouse Movement]
        F[Click Position]
        G[Dwell Time]
        H[Page Scrolling]
        I[Keyboard Rhythm]
    end
    E --> A
    F --> A
    G --> A
    H --> A
    I --> A
    D --> J{"LLM Judgment: Hesitant Real User vs. Perfect Automated Script"}
```

This allows the system to differentiate between a "hesitant, real user" and a "perfectly automated script," based on the inherent "human imperfections" in genuine interactions.
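
The "human imperfections" idea can be illustrated without a Transformer. The sketch below is a deliberately simple stand-in, not the embedding-plus-Transformer pipeline described above: it computes two hand-written features from a mouse trace, path straightness and timing jitter. The `Point` type, the function names, and both example traces are assumptions made for illustration.

```python
import math
import statistics
from typing import NamedTuple, Sequence


class Point(NamedTuple):
    x: float
    y: float
    t: float  # timestamp in seconds


def straightness(trace: Sequence[Point]) -> float:
    """Straight-line distance divided by travelled path length (1.0 = perfectly straight)."""
    path = sum(math.dist((a.x, a.y), (b.x, b.y)) for a, b in zip(trace, trace[1:]))
    if path == 0:
        return 1.0
    direct = math.dist((trace[0].x, trace[0].y), (trace[-1].x, trace[-1].y))
    return direct / path


def timing_jitter(trace: Sequence[Point]) -> float:
    """Population standard deviation of the gaps between consecutive events."""
    gaps = [b.t - a.t for a, b in zip(trace, trace[1:])]
    return statistics.pstdev(gaps) if gaps else 0.0


# A scripted trace: evenly spaced points on a straight line.
scripted = [Point(i * 10.0, i * 10.0, i * 0.01) for i in range(10)]

# A hand-made "human-like" trace: wobbling path and uneven timing.
human = [
    Point(0, 0, 0.00), Point(12, 7, 0.03), Point(19, 22, 0.04),
    Point(33, 28, 0.09), Point(41, 44, 0.11), Point(50, 50, 0.18),
]

for name, trace in (("scripted", scripted), ("human", human)):
    print(name, round(straightness(trace), 3), round(timing_jitter(trace), 4))
```

The scripted trace comes out almost perfectly straight with near-zero jitter, while the hand-made trace does not. A learned sequence model would replace these two features with embeddings, but the signal it looks for is of the same kind.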

🗺️ III. Strategic Advantage: Optimizing Automation Costs with LLMs

The essence of effective automation is not absolute prevention, but making unauthorized bypass economically unfeasible. LLMs amplify this cost asymmetry, making legitimate automation more efficient and unauthorized automation prohibitively expensive.

Cost Comparison: Unauthorized Automation vs. Intelligent Infrastructure

| Cost Factor | Unauthorized Automation | Intelligent Infrastructure |
|---|---|---|
| Data Collection | High (for training) | Low (behavioral data acquisition) |
| Model Training | High (iterative training) | Medium (generative model deployment) |
| Adversarial Sample Generation | High | N/A |
| Effectiveness Lifespan | Low (CAPTCHA becomes obsolete) | High (dynamic strategy updates) |
| Detection Risk | High | Low |
| False Positive Handling | N/A | Medium (appeal processing) |

Conclusion: The operational costs for unauthorized automation are significantly higher than the sustainable costs of maintaining LLM-powered infrastructure, ensuring long-term, robust automation.

How LLMs Enhance Cost Optimization:

- Increased Generalization Cost: Generative CAPTCHAs draw from an effectively unbounded visual space, so models trained offline on a fixed question bank do not transfer to new instances.

- Increased Inference Cost: Semantic CAPTCHAs require multi-step reasoning, consuming significant computational resources for unauthorized attempts.

- Reduced Time-to-Live: Shorter CAPTCHA validity periods render cracked solutions obsolete before they can be widely deployed.

- Data Contamination: Dynamic obfuscation of real traffic with honeypot data pollutes training datasets for unauthorized automation.
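
The cost asymmetry described in this section can be made concrete with a little arithmetic. The sketch below is a simplified model under stated assumptions: the function names are hypothetical, and every number in the example is made up purely to show the effect of a shorter effectiveness lifespan, not taken from any measurement.

```python
def amortized_cost_per_attempt(
    training_cost: float,
    inference_cost: float,
    attempts_before_obsolete: int,
) -> float:
    """Spread the one-off training cost over the attempts made before the challenge rotates."""
    if attempts_before_obsolete <= 0:
        raise ValueError("attempts_before_obsolete must be positive")
    return training_cost / attempts_before_obsolete + inference_cost


def expected_profit_per_attempt(
    success_rate: float,
    value_per_success: float,
    cost_per_attempt: float,
) -> float:
    """Expected gain of one bypass attempt; bypassing stops paying off when this is negative."""
    return success_rate * value_per_success - cost_per_attempt


# Illustrative numbers only: the same solver, with a long and a short challenge lifespan.
long_lived = amortized_cost_per_attempt(1000.0, 0.01, attempts_before_obsolete=100_000)
short_lived = amortized_cost_per_attempt(1000.0, 0.01, attempts_before_obsolete=1_000)

print(expected_profit_per_attempt(0.5, 0.10, long_lived) > 0)   # profitable while challenges stay static
print(expected_profit_per_attempt(0.5, 0.10, short_lived) > 0)  # unprofitable once they rotate quickly
```

In this toy model, a shorter time-to-live shrinks the number of attempts over which training cost is spread, while costlier inference raises the per-attempt term directly. Both push the expected profit below zero.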

🚀 IV. Future Outlook: Building a Seamless, Trust-Based Automation Ecosystem

We envision a future where verification is an invisible, continuous process, seamlessly integrated into the user experience.

4.1 Phase 1: LLM as an "Efficiency Co-pilot" (Current - Near Future)

In this initial phase, LLMs serve as an intelligent assistant, enhancing the efficiency of security operations rather than directly making critical decisions. They process complex verification logic, significantly reducing the frequency of manual intervention and providing actionable insights to human experts.

```mermaid
graph TD
    A[User Request] --> B{Traditional Verification System}
    B --> C{CAPTCHA Encountered}
    C --> D[LLM Co-pilot: Analyze CAPTCHA & Context]
    D --> E{Human Security Expert: Review & Decision}
    E --> F[Verification Outcome]
    D -- "Suggests Solutions" --> E
    E -- "Provides Feedback" --> D
```

Key Principle: LLMs act as a co-pilot, augmenting human expertise to improve operational efficiency.

4.2 Phase 2: Dynamic Generative Verification (Near Future - Mid-term)

This phase combines LLMs with generative models (like Diffusion models) to create CAPTCHAs that are impossible to pre-train. Each verification instance is unique, ensuring that any successful bypass of one instance provides no advantage for subsequent attempts. Verification shifts from a "question bank extraction" model to "real-time creation."

```mermaid
graph TD
    A[User Access] --> B[LLM: Understand Page Context]
    B --> C["Generative AI (Diffusion): Create Semantic CAPTCHA"]
    C --> D[User: Solve Unique CAPTCHA]
    D --> E[Verification Success/Failure]
    subgraph Example CAPTCHA
        F["This article mentions 3 cities, please mark their locations on the map."]
    end
    C --> F
```

Example of a Future CAPTCHA:

User accesses a page → LLM understands page content → Generates a semantically relevant verification question.

- "This article mentions 3 cities; please mark their locations on the map."

This requires understanding article content, geographical knowledge, and image interaction, making automated bypass extremely costly, while remaining manageable for human users.

4.3 Phase 3: Continuous Trust Engine (Mid-term - Far Future)

The ultimate goal is the "disappearance" of explicit CAPTCHAs, replaced by a continuous, background trust assessment. Users no longer perceive a verification step, as the system constantly evaluates trust based on real-time behavioral signals.

```mermaid
graph TD
    A[User Opens App] --> B[Background: Collect Behavioral Signals]
    B --> C[LLM: Real-time Trust Score Calculation]
    C --> D{"Trust Score > Threshold?"}
    D -- Yes --> E[Seamless Operations]
    D -- "No (Silent Degradation)" --> F[Limited Functionality]
    D -- "No (Explicit Verification)" --> G[Trigger CAPTCHA/Intervention]
```

Hypothetical 2030 Verification Experience:

User opens App → Background continuously collects behavioral signals → LLM calculates real-time trust score.

- Trust Score > Threshold: All operations proceed seamlessly.

- Trust Score < Threshold: Certain functionalities are silently degraded.

- Trust Score << Threshold: Triggers explicit verification or intervention.

Users would never need to click "I am not a robot," achieving a truly seamless and efficient experience.
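
The three-way routing described above can be sketched as a small function. This is a minimal illustration, not a production policy: the `Action` names, the two threshold parameters, and their default values are assumptions, and a score exactly equal to the threshold (which the description above leaves open) is treated here as silent degradation.

```python
from enum import Enum


class Action(Enum):
    SEAMLESS = "seamless_operations"
    DEGRADE = "silent_degradation"
    CHALLENGE = "explicit_verification"


def route(
    trust_score: float,
    threshold: float = 0.7,        # assumed value, for illustration only
    challenge_below: float = 0.3,  # assumed cut-off for "far below threshold"
) -> Action:
    """Map a real-time trust score to one of the three outcomes."""
    if not 0.0 <= challenge_below <= threshold <= 1.0:
        raise ValueError("expected 0 <= challenge_below <= threshold <= 1")
    if trust_score > threshold:
        return Action.SEAMLESS
    if trust_score < challenge_below:
        return Action.CHALLENGE
    return Action.DEGRADE


for score in (0.9, 0.5, 0.1):
    print(score, route(score).value)
```

Keeping the middle band explicit makes the "silent degradation" behavior a deliberate, tunable choice rather than a side effect of a single cut-off.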

4.4 Beyond: Pioneering the Future of AI-Native Verification

We are also exploring advanced concepts, such as "AI-Specific CAPTCHAs" – designed to differentiate between human-assisted AI (e.g., users employing AI assistants) and purely automated scripts. As AI assistants become ubiquitous, this distinction will be crucial for maintaining fair and secure digital interactions.

⚠️ V. Ethics and Responsible AI Implementation

While LLMs offer unprecedented opportunities for efficiency, we emphasize a responsible approach to AI implementation, prioritizing transparency and ethical considerations:

```mermaid
graph TD
    A[LLM-Driven Automation] --> B{Transparency First}
    A --> C{Cost Control}
    A --> D["Safety Net: Human-in-the-Loop"]
    B --> B1["Data Privacy Protection"]
    B --> B2[Bias Mitigation]
    B --> B3[Explainability Analysis]
    C --> C1[Optimized Model Inference]
    C --> C2[High ROI vs. Manual Processing]
    D --> D1[Human Oversight]
    D --> D2[Manual Review for Complex Scenarios]
```

Key Considerations:

- Data Privacy: Ensuring all data collection and processing adheres to global privacy protection standards.

- Bias Mitigation: Continuously monitoring and mitigating potential biases in LLM-driven decision-making to ensure fairness.

- Transparency & Explainability: Providing clear insights into how LLMs make verification decisions, especially in cases of user friction.

- Human-in-the-Loop: Maintaining mechanisms for human oversight and intervention in complex or ambiguous scenarios.

Core Principle: AI-driven decisions are primary, with rule-based fallbacks and human-AI collaboration ensuring robust and ethical operation.
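
The layering in this principle (AI decision first, rules as a fallback, humans for doubtful cases) might look like the sketch below. It is one possible arrangement under stated assumptions: `decide_with_fallback`, the `confidence` field, and the `min_confidence` default are hypothetical, and the two decision functions are passed in as plain callables so that no specific model API is implied.

```python
from typing import Callable


def decide_with_fallback(
    context: dict,
    llm_decide: Callable[[dict], dict],
    rule_decide: Callable[[dict], dict],
    min_confidence: float = 0.6,  # assumed value, for illustration only
) -> dict:
    """Prefer the LLM decision, fall back to rules on failure, flag doubtful cases."""
    try:
        decision = dict(llm_decide(context))
        source = "llm"
    except Exception:
        # Broad on purpose in this sketch: any model failure triggers the rule-based path.
        decision = dict(rule_decide(context))
        source = "rules"
    # "confidence" is an assumed field; a missing value is treated as low confidence.
    needs_review = decision.get("confidence", 0.0) < min_confidence
    return {**decision, "source": source, "needs_human_review": needs_review}
```

Recording the `source` alongside each decision supports the explainability goal listed above, since reviewers can see whether a given outcome came from the model or from the fallback rules.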

💡 VI. Actionable Strategies for Enterprises: Embracing Intelligent Automation

To harness the power of LLM-driven automation, enterprises can adopt the following strategies:

- 📊 Assess Current State: Evaluate existing verification systems for vulnerability to open-source OCR/detection models and analyze key metrics like false positive rates, user complaint rates, and automation success rates.

- 🧪 Pilot & Iterate: Begin with low-risk business lines to pilot "seamless verification" or "dynamic difficulty" solutions. Establish A/B testing frameworks to quantify the impact of new strategies.

- 📚 Stay Ahead of the Curve: Monitor advancements in generative AI (e.g., Diffusion models) and multimodal LLMs for their applications in verification and automation. Engage with industry security conferences (e.g., BlackHat, DEF CON, RSA) to stay informed.

- 🗄️ Data-Centric Approach: Start building high-quality datasets that capture "human-machine behavioral differences." Explore federated learning for collaborative data intelligence while preserving privacy.

- 👥 Cross-Functional Collaboration: Build teams comprising AI engineers, security researchers, product managers, and domain experts. Conduct regular internal red-teaming exercises and establish knowledge-sharing mechanisms.
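
For the "Pilot & Iterate" step, one common way to quantify an A/B comparison of rates (for example, false positive rates under the old and new strategy) is a two-proportion z-test. The sketch below is a generic statistical illustration rather than a method prescribed by this article; the function name and the example counts are made up.

```python
import math


def two_proportion_z_test(x_a: int, n_a: int, x_b: int, n_b: int) -> tuple:
    """Return (z, two-sided p-value) for H0: both arms share the same underlying rate.

    x_a / x_b are event counts (e.g. false positives); n_a / n_b are sample sizes.
    """
    if n_a <= 0 or n_b <= 0:
        raise ValueError("sample sizes must be positive")
    p_a, p_b = x_a / n_a, x_b / n_b
    pooled = (x_a + x_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 0.0, 1.0  # no variation at all, so no evidence of a difference
    z = (p_a - p_b) / se
    p_value = math.erfc(abs(z) / math.sqrt(2))  # equals 2 * (1 - Phi(|z|))
    return z, p_value


# Made-up counts: 80 false positives in 1,000 control sessions vs. 50 in 1,000 pilot sessions.
z, p = two_proportion_z_test(80, 1000, 50, 1000)
print(f"z = {z:.2f}, p = {p:.4f}")
```

A small p-value suggests the difference between the arms is unlikely to be noise. Sample sizes and the choice of metric still need to be fixed before the pilot starts.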

🎯 Conclusion: The Future of Verification is Seamless Efficiency

The 25-year history of CAPTCHAs reveals a cycle: AI creation → CAPTCHA for AI defense → AI bypasses CAPTCHA → CAPTCHA upgrades, frustrating humans → Humans train AI for free → AI becomes more powerful... The advent of LLMs, however, offers a paradigm shift.

With intelligent AI Automation Infrastructure, verification transcends being a mere obstacle. It transforms into a "Trust Membrane" seamlessly enveloping business operations, silently sensing risk, dynamically adjusting intensity, and striking an optimal balance between security and user experience.

The ultimate form of verification is "Seamless Efficiency." It's not the disappearance of security needs, but the invisible integration of verification. Our goal is to ensure that 90% of legitimate users never perceive a verification step, while 100% of unauthorized automation faces economically unsustainable costs.

As a leading global provider of Automated CAPTCHA Recognition solutions, we are committed to innovation that eliminates friction in business processes. We aim to build a smarter and more efficient automation ecosystem, empowering enterprises to focus on core growth, unburdened by verification challenges.

Start Building a More Efficient Automation System

If you are exploring how to achieve more stable and efficient automation processes in complex verification environments, a reliable AI automation infrastructure will be key.

👉 Through CapSolver, you can:

- Achieve automated recognition and processing of mainstream CAPTCHA types

- Reduce manual intervention costs and improve process continuity

- Maintain stable success rates in dynamic verification environments

- Quickly integrate with existing business systems

Whether it's data collection, growth automation, or complex business process optimization, CapSolver can serve as the underlying capability to help you build a more efficient automation system.

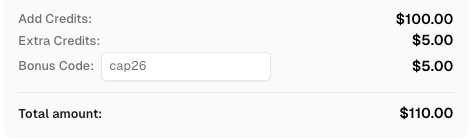

🎁 Exclusive Benefits

Use code CAP26 when signing up at CapSolver to receive bonus credits!
