What Is Puppeteer and How Does It Work in Web Automation?

Answer

Puppeteer is a Node.js library that provides a high-level API for controlling Chrome or Chromium programmatically, in headless mode by default. It lets developers automate web interactions such as scraping data, testing applications, and generating screenshots by driving a real browser the way a user would.

Detailed Explanation

Puppeteer works by communicating directly with the browser over the Chrome DevTools Protocol (CDP), which lets scripts control actions such as navigation, clicking elements, and executing JavaScript in the page. Unlike HTTP-based scraping tools that only download the raw HTML, Puppeteer works with the fully rendered page, including content generated by JavaScript. This makes it well suited to modern websites built with frameworks such as React or Vue.
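
The navigate, wait, and extract flow described above might look like the following minimal sketch. It assumes `page` is a Puppeteer `Page` (for example from `await browser.newPage()`); the helper name `scrapeRenderedList` and the selector you pass it are hypothetical. `page.goto`, `page.waitForSelector`, and `page.$$eval` are Puppeteer methods; the callback given to `$$eval` runs inside the browser, and only its serializable return value comes back to Node.js.

```javascript
// Hypothetical helper: scrape the text of repeated items from a JavaScript-rendered page.
// `page` is assumed to be a Puppeteer Page object.
async function scrapeRenderedList(page, url, itemSelector) {
  // Navigate and wait until network activity has mostly settled,
  // giving client-side frameworks time to fetch their data.
  await page.goto(url, { waitUntil: "networkidle2" });

  // Wait until at least one item has actually been rendered into the DOM.
  await page.waitForSelector(itemSelector, { timeout: 15000 });

  // Run a function inside the page context over all matching elements
  // and return plain strings to Node.js.
  return page.$$eval(itemSelector, (elements) =>
    elements.map((el) => el.textContent.trim())
  );
}
```

For example, `await scrapeRenderedList(page, "https://example.com/products", ".product-title")` would return the trimmed text of every matching element once at least one has rendered; the URL and selector are placeholders.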

By default, Puppeteer runs in headless mode, meaning the browser operates without a graphical interface. This saves resources while the browser still loads page resources and executes scripts. Developers can automate tasks such as form submissions, UI testing, PDF generation, and full-page screenshots, all through a few JavaScript calls.
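
A rough sketch of switching between headless and headful runs and producing the screenshots and PDFs mentioned above. `headless`, `slowMo`, and `defaultViewport` are `puppeteer.launch()` options, and `fullPage`, `format`, and `printBackground` are options of `page.screenshot()` and `page.pdf()`; the helper names and the specific values (100 ms, 1280x800, A4) are illustrative assumptions.

```javascript
// Hypothetical helper: build options for puppeteer.launch().
// Headless for normal runs; a visible, slowed-down browser for debugging.
function buildLaunchOptions({ debug = false } = {}) {
  return {
    headless: !debug, // false opens a visible (headful) browser window
    slowMo: debug ? 100 : 0, // slow each Puppeteer operation by N ms while debugging
    defaultViewport: { width: 1280, height: 800 },
  };
}

// Hypothetical helper: save a full-page screenshot and a PDF of an already-loaded page.
// `page` is assumed to be a Puppeteer Page.
async function captureArtifacts(page, baseName) {
  const pngPath = `${baseName}.png`;
  const pdfPath = `${baseName}.pdf`;
  await page.screenshot({ path: pngPath, fullPage: true });
  await page.pdf({ path: pdfPath, format: "A4", printBackground: true });
  return [pngPath, pdfPath];
}
```

In a script you would pass the result to `puppeteer.launch(buildLaunchOptions({ debug: true }))` (after `import puppeteer from "puppeteer"`) and call `captureArtifacts(page, "report")` once navigation has finished.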

Because Puppeteer drives a real browser, it is particularly useful for scraping complex, JavaScript-heavy websites. However, automated browsers still expose detectable signals, so Puppeteer scripts are subject to bot detection systems that inspect browser fingerprints, behavior patterns, and interaction timing.
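
To see what a detection script might notice, you can read the same values from inside your own automated page. This is a minimal sketch: `readAutomationSignals` and `automationRedFlags` are hypothetical names, and the two checks shown (`navigator.webdriver` being `true`, and `HeadlessChrome` appearing in the user agent) are only a couple of well-known examples, not a model of any particular detection system.

```javascript
// Hypothetical helper: read properties that bot-detection scripts commonly inspect.
// `page` is assumed to be a Puppeteer Page; the arrow function passed to
// page.evaluate() runs inside the browser, not in Node.js.
async function readAutomationSignals(page) {
  return page.evaluate(() => ({
    webdriver: navigator.webdriver,
    userAgent: navigator.userAgent,
  }));
}

// Hypothetical helper: flag two well-known automation giveaways.
function automationRedFlags(signals) {
  const flags = [];
  // true when Chrome runs with automation enabled, as Puppeteer does by default
  if (signals.webdriver === true) flags.push("navigator.webdriver is true");
  // headless Chrome can advertise itself in the user-agent string
  if (/HeadlessChrome/.test(signals.userAgent || "")) {
    flags.push("user agent contains HeadlessChrome");
  }
  return flags;
}
```

Calling `automationRedFlags(await readAutomationSignals(page))` on a default headless session shows which of these signals your setup is leaking.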

Solutions / Methods

- Use Puppeteer for Dynamic Web Scraping: Leverage its ability to render JavaScript-heavy pages, interact with DOM elements, and extract structured data from modern web applications that HTTP-only scrapers cannot handle.

- Combine with Proxy and Anti-Detection Techniques: Integrate rotating proxies, user-agent spoofing, and browser fingerprint management to reduce detection risk when running automation at scale.

- Integrate CAPTCHA Solving Services: When automation encounters CAPTCHA challenges (e.g., reCAPTCHA or Cloudflare Turnstile), services such as CapSolver can solve them automatically, keeping scraping workflows running and improving success rates on protected sites.
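
One way to combine proxy rotation and user-agent spoofing from the list above, as a sketch under stated assumptions: the proxy URLs and user-agent strings are placeholders, the round-robin rotation is a deliberately simple choice, and fingerprint management beyond the user agent is not covered. Chrome applies `--proxy-server` to the whole browser, so this sketch rotates proxies per launch; `page.setUserAgent()` and `page.authenticate()` are Puppeteer methods, the latter supplying credentials for proxies that require them.

```javascript
// Placeholder values: substitute your own proxy endpoints and user agents.
const PROXIES = ["http://proxy-a.example:8000", "http://proxy-b.example:8000"];
const USER_AGENTS = [
  "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/124.0.0.0 Safari/537.36",
  "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_7) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/124.0.0.0 Safari/537.36",
];

// Hypothetical helper: launch options for the n-th run, round-robin over the proxy pool.
// Chrome's --proxy-server flag applies to the whole browser instance.
function launchOptionsForRun(runIndex) {
  const proxy = PROXIES[runIndex % PROXIES.length];
  return { headless: true, args: [`--proxy-server=${proxy}`] };
}

// Hypothetical helper: open a page with a rotated user agent and optional proxy credentials.
async function preparePage(browser, runIndex, credentials) {
  const page = await browser.newPage();
  await page.setUserAgent(USER_AGENTS[runIndex % USER_AGENTS.length]);
  if (credentials) {
    // { username, password } for proxies that require authentication
    await page.authenticate(credentials);
  }
  return page;
}
```

A run would then start with `const browser = await puppeteer.launch(launchOptionsForRun(i))` followed by `const page = await preparePage(browser, i)`.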
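
A CAPTCHA solving service usually needs to know which widget is on the page and its site key. The sketch below assumes the widgets use the standard implicit-rendering containers (`.g-recaptcha` for reCAPTCHA, `.cf-turnstile` for Turnstile) with a `data-sitekey` attribute; pages that render widgets programmatically may not match. `solveCaptcha` is a hypothetical callback standing in for whatever client your solving service provides; it is not CapSolver's API. How the returned token is submitted depends on the site and is not shown.

```javascript
// Hypothetical helper: find CAPTCHA widgets in their implicit-rendering
// containers and return their type and site key.
async function detectCaptchas(page) {
  return page.$$eval(
    ".g-recaptcha[data-sitekey], .cf-turnstile[data-sitekey]",
    (elements) =>
      elements.map((el) => ({
        type: el.classList.contains("cf-turnstile") ? "turnstile" : "recaptcha",
        sitekey: el.getAttribute("data-sitekey"),
      }))
  );
}

// Hypothetical helper: hand each detected widget to a solver callback.
// `solveCaptcha` is an assumed async function returning a token string.
async function handleCaptchas(page, solveCaptcha) {
  const found = await detectCaptchas(page);
  const tokens = [];
  for (const captcha of found) {
    tokens.push(await solveCaptcha({ ...captcha, pageUrl: page.url() }));
  }
  return tokens;
}
```

If no widget is found, `handleCaptchas` simply returns an empty array and the workflow can continue.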

Best Practice / Tips

- Always use an explicit waiting strategy (e.g., page.waitForSelector) so that elements exist, and are visible if needed, before you interact with them.

- Use headful mode (headless: false) during debugging to watch the automation as it runs.

- Limit request rates and randomize actions to better simulate human browsing patterns.

- Monitor response status codes and implement retry logic for stability.
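
The last two tips, randomized pacing and retries driven by status codes, can be combined in a small helper. This is a sketch rather than a library API: `randomDelay` and `gotoWithRetry` are hypothetical names, treating 429 and 5xx responses plus thrown navigation errors as retryable is an assumption you may want to adjust, and the default delays are illustrative. `page.goto()` resolves to an `HTTPResponse` (or `null`) whose `status()` method returns the HTTP status code.

```javascript
// Hypothetical helper: wait a random number of milliseconds in [minMs, maxMs].
function randomDelay(minMs, maxMs) {
  const ms = minMs + Math.floor(Math.random() * (maxMs - minMs + 1));
  return new Promise((resolve) => setTimeout(resolve, ms));
}

// Hypothetical helper: navigate with retries.
// Retries on thrown errors (timeouts, connection resets) and on 429/5xx responses.
async function gotoWithRetry(page, url, { retries = 3, baseDelayMs = 1000 } = {}) {
  let lastError;
  for (let attempt = 0; attempt <= retries; attempt++) {
    try {
      const response = await page.goto(url, { waitUntil: "domcontentloaded" });
      if (!response) return null; // e.g. same-document (hash) navigation
      const status = response.status();
      // Success, redirect, or a non-retryable 4xx: hand it back to the caller.
      if (status !== 429 && status < 500) return response;
      lastError = new Error(`HTTP ${status} for ${url}`);
    } catch (err) {
      lastError = err;
    }
    if (attempt < retries) {
      // Exponential backoff plus random jitter so retries are not evenly spaced.
      const backoff = baseDelayMs * 2 ** attempt;
      await randomDelay(backoff, backoff * 2);
    }
  }
  throw lastError;
}
```

Between ordinary actions you might also call `await randomDelay(500, 1500)` to avoid a fixed, machine-like rhythm.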

👉 Related:

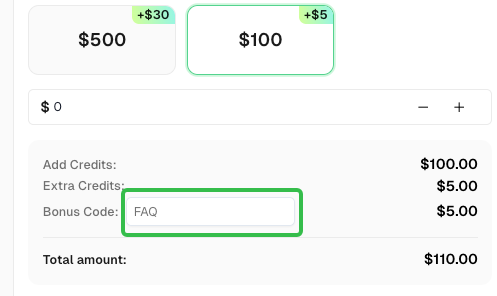

Use code FAQ when signing up at CapSolver to receive an additional 5% bonus on your recharge.

CapSolver FAQ — capsolver.com