Best Alternatives to Python Requests Library for HTTP Automation

Answer

The most common alternatives to the Python Requests library are modern HTTP clients such as HTTPX and AIOHTTP, along with higher-level scraping frameworks like Scrapy. These tools support asynchronous execution, improved scalability, and better performance for high-volume web scraping and API automation workloads compared to traditional synchronous request handling.

Detailed Explanation

The Requests library is widely used because of its simplicity and stable synchronous design, but it becomes limiting when handling large-scale or concurrent HTTP workloads. In traditional blocking I/O, each request waits for a response before the next one starts, which significantly reduces efficiency under heavy traffic.

Modern web automation tasks, such as data extraction, API aggregation, or bot-driven workflows, often require handling hundreds or thousands of simultaneous connections. This is where asynchronous HTTP clients become essential. Libraries like HTTPX and AIOHTTP build on Python’s asyncio framework to enable non-blocking network communication: a single process can keep many requests in flight while it waits on the network, which improves throughput and responsiveness.
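The difference between blocking and non-blocking I/O can be illustrated without any network access. The sketch below is only a simulation: `blocking_fetch`, `async_fetch`, and the 0.05-second `LATENCY` value are illustrative assumptions that stand in for real HTTP calls, with sleeps playing the role of network wait time.

```python
import asyncio
import time

LATENCY = 0.05  # assumed per-request latency in seconds (illustrative only)


def blocking_fetch(i: int) -> int:
    """Stand-in for a synchronous HTTP call: blocks the thread while waiting."""
    time.sleep(LATENCY)
    return i


async def async_fetch(i: int) -> int:
    """Stand-in for an async HTTP call: yields to the event loop while waiting."""
    await asyncio.sleep(LATENCY)
    return i


def run_sequential(n: int):
    """Issue n simulated requests one after another; return (results, seconds)."""
    start = time.perf_counter()
    results = [blocking_fetch(i) for i in range(n)]
    return results, time.perf_counter() - start


async def _gather_all(n: int):
    # gather() schedules all coroutines at once and preserves input order.
    return await asyncio.gather(*(async_fetch(i) for i in range(n)))


def run_concurrent(n: int):
    """Issue n simulated requests concurrently; return (results, seconds)."""
    start = time.perf_counter()
    results = asyncio.run(_gather_all(n))
    return results, time.perf_counter() - start


if __name__ == "__main__":
    _, seq_seconds = run_sequential(10)
    _, con_seconds = run_concurrent(10)
    print(f"sequential: {seq_seconds:.2f}s  concurrent: {con_seconds:.2f}s")
```

In the sequential version the waits add up, while in the concurrent version they overlap, so total time stays close to a single request's latency. Real workloads will not scale this cleanly, since servers, connection limits, and rate limiting all cap the gain.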

Additionally, modern websites frequently use security management systems, rate limiting, and CAPTCHA challenges to restrict automated traffic. This introduces extra complexity for HTTP clients, making advanced tooling and mitigation strategies necessary in production scraping systems.

Solutions / Methods

- Requests (Synchronous Approach): Best for simple API calls, prototypes, and low-volume scripts where concurrency is not required.

- HTTPX (Modern Hybrid Client): Supports both synchronous and asynchronous requests behind a Requests-like API, with optional HTTP/2 support, making it a flexible upgrade path for evolving applications.

- AIOHTTP (High-Concurrency Async): Optimized for large-scale scraping systems and real-time pipelines where throughput and concurrency are critical. For environments protected by CAPTCHA or security management systems, solutions like CapSolver can help automate challenge resolution and maintain uninterrupted data flow.
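As a rough sketch of what the move from sequential calls to an async client can look like, the example below caps the number of in-flight requests with a semaphore. The helper names (`fetch_all`, `fetch_status_codes`, `fetch_status_sync`), the concurrency limit of 10, and the 10-second timeout are assumptions chosen for illustration. The HTTPX parts require `pip install httpx`, and the import is deferred so the generic helper itself depends only on the standard library.

```python
import asyncio


async def fetch_all(urls, fetch, max_concurrency=10):
    """Run `await fetch(url)` for every URL, with at most
    `max_concurrency` requests in flight at any moment."""
    semaphore = asyncio.Semaphore(max_concurrency)

    async def bounded(url):
        async with semaphore:
            return await fetch(url)

    # Results come back in the same order as `urls`.
    return await asyncio.gather(*(bounded(url) for url in urls))


async def fetch_status_codes(urls):
    """Illustrative wiring with HTTPX's async client."""
    import httpx  # third-party; imported lazily on purpose

    async with httpx.AsyncClient(timeout=10.0) as client:

        async def fetch(url):
            response = await client.get(url)
            return response.status_code

        return await fetch_all(urls, fetch)


def fetch_status_sync(url):
    """The same library used synchronously, much like Requests."""
    import httpx

    with httpx.Client(timeout=10.0) as client:
        return client.get(url).status_code
```

A caller would run the async variant with something like `asyncio.run(fetch_status_codes(list_of_urls))`. Bounding concurrency is a design choice rather than a requirement: it keeps a large URL list from opening thousands of connections at once and tends to be gentler on rate-limited targets.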

Best Practice / Tips

When choosing an HTTP client, prioritize architecture over syntax convenience. If your workload is small and sequential, Requests is sufficient. For scalable systems, prefer async-capable clients such as HTTPX or AIOHTTP. Additionally, design your scraping pipeline with retry logic, proxy rotation, and CAPTCHA-handling strategies to ensure stability under modern web defenses.
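Retry logic is the easiest of these to sketch in isolation. The example below is one possible shape, not a prescribed implementation: `retry_with_backoff` and its parameters are hypothetical names, and the delays (0.5 s base, doubling per attempt, capped at 8 s, plus random jitter) are assumed defaults that should be tuned per target.

```python
import random
import time


def retry_with_backoff(
    operation,
    max_attempts=4,
    base_delay=0.5,
    max_delay=8.0,
    retry_on=(ConnectionError, TimeoutError),
    sleep=time.sleep,
):
    """Call `operation()` until it succeeds or `max_attempts` is reached.

    Waits base_delay * 2**(attempt - 1) seconds (capped at max_delay) plus
    random jitter between attempts. Exceptions not listed in `retry_on`
    propagate immediately; the last failure is re-raised when attempts
    run out.
    """
    for attempt in range(1, max_attempts + 1):
        try:
            return operation()
        except retry_on:
            if attempt == max_attempts:
                raise
            delay = min(max_delay, base_delay * 2 ** (attempt - 1))
            # Jitter spreads retries out so many workers do not retry in lockstep.
            sleep(delay + random.uniform(0, delay / 2))
```

In practice `operation` would wrap a single HTTP call, and `retry_on` would list the client's own transient error types. The `sleep` parameter exists mainly so the function can be exercised in tests without real waiting.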

👉 Related:

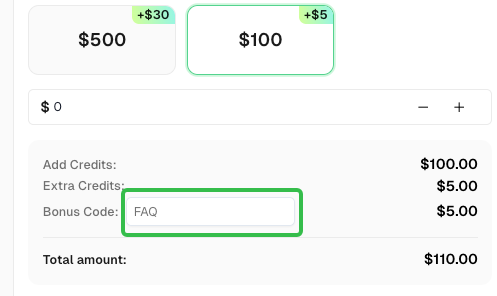

Use code FAQ when signing up at CapSolver to receive an additional 5% bonus on your recharge.

CapSolver FAQ - capsolver.com